E-E-A-T stopped being a rater rubric in 2024 and became an entity-graph problem in 2026; the sites that solved the author chain are the ones AI Overviews still cite.

The doctrine became infrastructure

Three dates anchor the 2026 picture. Google added Experience to E-A-T on December 15, 2022, formally publishing the change in its Search Quality Rater Guidelines and on the Search Central blog. The Helpful Content System merged into core ranking in March 2024, retiring the standalone classifier and distributing helpfulness assessment across multiple core systems. The December 2025 broad core update extended E-E-A-T-style scrutiny beyond Your Money or Your Life pages into lifestyle, SaaS, e-commerce, and reviews – the categories most operators assumed were safe.

For lead generation sites, that combination produced a quiet earthquake. The Search Quality Rater Guidelines remain a 168-page document used to calibrate human raters rather than rank pages directly. But the language of E-E-A-T has been absorbed into ranking systems that do affect placement, and into the AI Overview generation pipeline that decides which sources get cited above the fold. The distinction between rubric and ranking factor narrowed to the point where treating it as binary became operationally wrong.

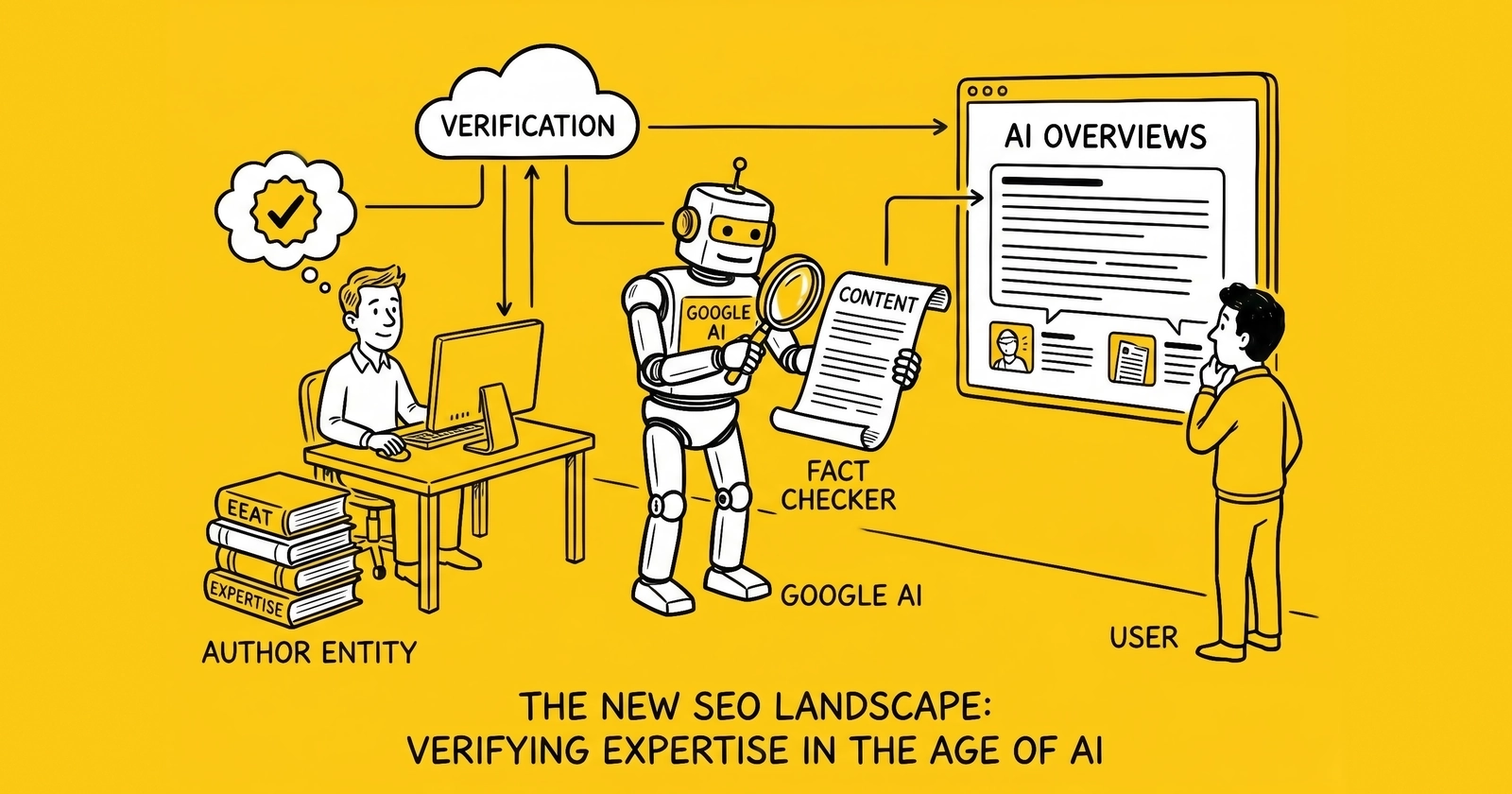

The mechanical change in 2026 sits on top of that doctrinal shift. AI Overviews and other generative answer surfaces favor content from entities Google has high confidence in. Confidence in this context is not a vibe – it is a graph traversal that reaches Wikidata, Wikipedia, LinkedIn, ORCID, and similar registries to verify that the author claimed in schema is the same author identified elsewhere on the open web. Sites with thin or invented author entities lose citation share to sites with sameAs chains that resolve.

Lead-gen operators face the sharpest version of this test for three reasons. The vertical is YMYL by definition because it routes consumers into insurance, mortgage, Medicare, legal, and personal finance decisions. Single-author sites dominate the long tail, which means the entire E-E-A-T weight of a domain often rests on one Person entity. And TCPA exposure adds a financial penalty layer – $500 to $1,500 per violation under 47 U.S.C. § 227 – that turns trust signal failures into more than a ranking problem.

The rest of this analysis traces how the framework arrived at this point, what the four signals look like once they leave rater training and enter machine evaluation, why the author-entity chain is now the load-bearing element, and what a 90-day operator playbook covers. The argument throughout is mechanical, not philosophical. E-E-A-T in 2026 is something a site either has plumbed correctly or it does not.

From 2014 acronym to 2026 entity verification

E-A-T entered Google’s vocabulary in 2014 as part of the Search Quality Rater Guidelines, framed as Expertise, Authoritativeness, and Trustworthiness. For nearly a decade it sat as a rater concept used by Google’s external evaluators when scoring search results during algorithm tests. Operators who watched the guidelines closely treated E-A-T as a directional signal – useful for understanding what Google rewarded, but indirect.

The first major shift arrived on December 15, 2022, when Google added Experience as a fourth pillar. The official Search Central blog post framed Experience as first-hand or life experience with the topic, distinct from formal expertise. A product reviewer who has actually used the product, a travel writer who has visited the destination, a tax preparer who has filed the specific form – each demonstrates Experience in a way that pure desk research cannot. Trust moved to the center of the diagram with the explicit caveat that untrustworthy pages have low E-E-A-T regardless of how Experienced, Expert, or Authoritative they may otherwise appear.

That reframing mattered because it made the framework auditable along a new axis. Rater guidelines could now be interpreted as asking: did the author personally encounter what they describe? For lead generation, the implication was specific and uncomfortable. Generic vertical guides written by anonymous content marketers – the dominant production model for SEO traffic in 2018 to 2022 – sat at the wrong end of every Experience criterion the new guidelines established.

The second shift was structural rather than rhetorical. In March 2024, Google integrated the Helpful Content System into core ranking. Before integration, the HCS operated as a standalone classifier with a single signal that could lift or suppress entire sites. After integration, helpfulness assessment dispersed across multiple core ranking systems, each evaluating different facets of E-E-A-T-aligned quality. The practical consequence was that recovery from a helpful-content hit no longer waited for a discrete classifier refresh; recoveries and demotions could now propagate continuously.

The third shift is where 2026 lives. AI Overviews launched broadly in May 2024 and matured through 2025 into a primary answer surface for informational queries. Sites that AI Overviews cite see traffic that Google itself attributes to AI-driven referrals. Sites that AI Overviews ignore lose the click before the user reaches the organic results. The mechanism by which AI Overviews decide whom to cite is not fully published, but the signals it relies on overlap heavily with E-E-A-T as expressed through structured data and entity confidence. Google’s March 2026 spam update – a SpamBrain refinement targeting scaled content abuse, where sites publish large volumes of AI-generated pages without editorial oversight – sharpened the penalty side of the same coin. Combined with the still-active site-reputation-abuse policy introduced in March 2024 (and tightened through November 2024), enforcement systems now downgrade two distinct patterns: third-party content riding a host domain’s authority, and unsupervised AI-scaled content riding any domain. Both patterns share a structural tell: an absent or unverifiable author entity.

The arc, then: E-A-T in 2014 was a label raters applied. E-E-A-T in 2022 became a four-axis rubric with Trust at the center. By 2024 it was distributed across core ranking. By 2026 it is something AI systems verify mechanically against external entity graphs. Each step removed degrees of interpretive freedom. The next shift is not another acronym change – it is full migration from rubric to checkable claim.

The four signals, re-read for machine evaluation

Each of the four E-E-A-T pillars carries a different evaluation pattern in 2026. Reading them through the lens of machine verification – rather than rater interpretation – clarifies what operators must produce.

Experience. The signal most resistant to fakery and the hardest to assert in schema. Experience shows up in content as specific operational detail: which platforms were used, which campaigns ran, which dollar figures applied, which mistakes occurred. Generative content struggles here because plausible synthetic detail rarely matches verifiable reality. The schema-level proxy for Experience is weak; the prose-level proxy is strong. AI Overviews and ranking systems pick up Experience indirectly by detecting density of concrete numbers, named tools, dated events, and procedural specifics – markers that correlate with first-hand involvement.

Expertise. Demonstrated through credential verifiability and topic depth. Where Experience says “the author did this,” Expertise says “the author is qualified to interpret this.” For YMYL topics, Expertise is increasingly graph-checkable: does the named author have a credential that resolves through ORCID, a state license registry, a board certification database, or an industry association directory? Lead-gen practitioners often hold platform certifications (ActiveProspect, Phonexa) and conference speaker histories (LeadsCon) that are verifiable through their own URLs even without academic credentials. Schema’s Person type accommodates this through hasCredential and knowsAbout properties combined with sameAs links to credentialing bodies.

Authoritativeness. A property of recognition rather than internal claim. Backlinks from industry publications, mentions in trade journalism, citations in trade-association reports, and inclusion in Wikipedia or Wikidata all contribute. The 2026 wrinkle is that authority signals now weight toward entity-anchored mentions over link-only mentions. A passing backlink from a high-authority domain helps. A backlink from a high-authority domain that explicitly names the author with consistent sameAs metadata helps more, because the citing domain reinforces the entity claim.

Trustworthiness. Google’s stated foundation of the entire model. Trust is multi-layered in 2026: factual accuracy backed by primary-source citations; site-level trust signals such as HTTPS, transparent business address, named editorial leadership, and disclosure pages; and operational trust signals such as TrustedForm or Jornaya integration for lead generation specifically. The relationship to the other three pillars is hierarchical rather than additive – a trust failure overrides any amount of Experience, Expertise, or Authority.

The table below maps each signal to the verification source most likely to be used by a machine evaluator in 2026.

| E-E-A-T Signal | Primary Verification Source | Schema Anchor | Lead-Gen Specific Proxy |
|---|---|---|---|
| Experience | Article prose density of concrete operational detail | Article.author.knowsAbout, body content | Specific CPL figures, named platforms, dated campaigns |
| Expertise | Credential databases, ORCID, state licenses, certifications | Person.hasCredential, Person.knowsAbout | ActiveProspect/Phonexa cert pages, LeadsCon speaker list |
| Authoritativeness | Wikipedia, Wikidata, industry publication mentions | Person.sameAs, citing-domain backlinks | Trade-press bylines, podcast guest archives, conference programs |
| Trustworthiness | HTTPS, primary-source citations, disclosure pages, business registration | Site-level metadata, Article.citation | TrustedForm/Jornaya integration disclosure, TCPA compliance pages |

The pattern across the table is consistent: each signal becomes stronger the more its assertion can be cross-checked against an external source the operator does not control. Self-asserted authority is weak by definition. Externally verifiable authority is the unit of currency.

For an end-to-end view of how lead-gen sites translate these signals into operational practice, the E-E-A-T trust signals guide covers the underlying compliance and credibility architecture in detail.

Why HCS integration made E-E-A-T enforceable

Before March 2024, the Helpful Content System operated as a single classifier producing a sitewide signal. Sites deemed unhelpful received a suppression that affected most or all of their content. Recovery required Google to refresh the classifier – which happened on a schedule outside operator control, sometimes with months between refreshes. The operational pattern was: bad content gets a sitewide tag, the tag stays until refresh, the site recovers or does not.

Integration into core changed three things at once. First, the single classifier became multiple signals dispersed across core ranking systems. Second, those signals now evaluate continuously rather than waiting for refresh cycles, so demotions and recoveries propagate as content changes rather than as scheduled events. Third, the integration allowed E-E-A-T-aligned heuristics – author authority, citation density, source quality, topic-author alignment – to participate in ranking decisions at the page level rather than only at the site level.

The practical consequence for operators: a single article with weak E-E-A-T signals can suppress its own ranking even when the rest of the site is healthy. Conversely, a single article with strong author and citation chains can rank when the rest of the site is mediocre. Pre-integration, E-E-A-T was a sitewide weather system. Post-integration, it is per-page weather.

The December 2025 broad core update widened that effect by extending E-E-A-T-style scrutiny beyond YMYL into categories that previously coasted on different signals. SaaS comparison pages, lifestyle reviews, e-commerce product roundups, and news verticals all started behaving like compliance-grade content under the December update – meaning author identity, primary-source citations, and disclosure clarity moved from optional to expected.

For lead generation, the implication ran in two directions simultaneously. The category was already YMYL, so the elevated standards already applied. But the December update meant that adjacent categories – lifestyle finance content, comparison-engine reviews, vertical news – now operated under similar rules, narrowing the gap between regulated and unregulated content production. Operators who had built lead-gen E-E-A-T programs found those programs increasingly transferable; operators who had not built them faced compounding pressure on multiple content lines.

The March 2026 spam update layered enforcement onto the same foundation. It was a SpamBrain refinement that explicitly named scaled content abuse – sites publishing large volumes of AI-generated pages without editorial oversight – as a primary target, with affected domains reporting 50-80% traffic drops. The update added no new policy categories; it sharpened detection within existing ones. The site-reputation-abuse policy introduced in March 2024, and tightened in November 2024 to cover any third-party content whose main purpose is exploiting host signals, remains active alongside it and is still enforced through manual actions.

For lead generation, two enforcement patterns now run in parallel. The first is the older site-reputation-abuse pattern: unrelated content hosted under a primary brand to capture rankings outside the brand’s natural domain expertise – a lead-gen site operating in auto insurance that publishes a sudden block of content on cryptocurrency, with no author credentials in crypto and no sameAs chain pointing to crypto expertise. The second, sharpened in March 2026, is scaled AI-generated content with no human editorial accountability – articles produced en masse without a named, verifiable author whose expertise tracks the topic.

The remedy is the same for both patterns: keep author entities, content topics, and domain identity aligned, and ensure every published article has a real human editorial owner. That alignment is exactly what author-entity verification produces.

The author-entity chain – Person schema and the sameAs ladder

The mechanical core of 2026 E-E-A-T is a Person entity in schema with a sameAs property pointing to authoritative external profiles. The richer and more verifiable the sameAs chain, the higher the entity’s confidence score in the systems that decide AI Overview citation, knowledge panel display, and ranking weight for author-attributed content.

A correctly assembled author Person entity carries the following structured fields at minimum: name, url (the canonical author page on the site), image (a professional headshot at a stable URL), jobTitle, worksFor (an Organization @id reference), description (one to two sentences of substantive bio), and sameAs (an array of external profile URLs). Optional but high-value additions include knowsAbout (an array of topic strings or DefinedTerm references), hasCredential (EducationalOccupationalCredential references for certifications), and alumniOf for academic affiliations.
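As a minimal sketch of the field list above, the Python below assembles a Person entity as a dictionary and serializes it to JSON-LD. The function name `build_person`, the example.com URLs, the author details, and the `/author/[slug]/#person` identifier pattern are illustrative assumptions rather than a prescribed implementation; only the property names come from Schema.org's Person type.

```python
import json


def build_person(base_url, slug, name, job_title, org_id,
                 description, image_url, same_as, knows_about=None):
    """Assemble a Schema.org Person entity as a plain dict (hypothetical helper)."""
    author_url = f"{base_url}/author/{slug}/"
    person = {
        "@context": "https://schema.org",
        "@type": "Person",
        # Stable identifier so other markup can reference this one definition.
        "@id": f"{author_url}#person",
        "name": name,
        "url": author_url,
        "image": image_url,
        "jobTitle": job_title,
        # Reference the Organization by @id instead of redefining it inline.
        "worksFor": {"@id": org_id},
        "description": description,
        "sameAs": list(same_as),
    }
    if knows_about:
        person["knowsAbout"] = list(knows_about)
    return person


# All values below are placeholders for illustration only.
person = build_person(
    base_url="https://example.com",
    slug="jane-doe",
    name="Jane Doe",
    job_title="Founder",
    org_id="https://example.com/#organization",
    description="Jane Doe has run insurance lead generation campaigns since 2011.",
    image_url="https://example.com/images/jane-doe.jpg",
    same_as=["https://www.linkedin.com/in/jane-doe-example"],
    knows_about=["Insurance lead generation", "TCPA compliance"],
)

print(json.dumps(person, indent=2))
```

The printed JSON would typically be embedded in a `<script type="application/ld+json">` element on the author page, alongside visible HTML that states the same facts.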

The sameAs array is where verification happens. Each URL in the array is a claim that the entity Google sees on the article page is the same entity described at the external URL. The systems consuming this data – Search, AI Overviews, the knowledge graph – traverse those links to confirm the claim. The hierarchy of weight, in rough order:

- Wikidata Q-ID URL. The highest-weight reference because Wikidata is the canonical entity graph Google uses for knowledge panel resolution. A Q-ID with sufficient statements (occupation, employer, notable works) and external identifiers establishes the entity as a graph node.

- Wikipedia article URL. Indicates editorial-grade notability. Most lead-gen authors will not have one; that is fine, but having one is decisive when it exists.

- ORCID identifier. The standard for academic and research authors. Less common in lead generation but high-weight when present, and trivially easy to obtain.

- LinkedIn profile URL. The closest thing lead-gen operators have to a universal verification anchor. Public profiles with consistent name, headshot, employer, and posting history are easy to cross-check.

- Industry-specific authoritative profiles. Conference speaker pages (LeadsCon, Affiliate Summit), trade-association directories (LeadsCouncil, Performance Marketing Association), platform certification badges, and SEC EDGAR pages for executives of public-reporting companies.

- Owned-property profiles. Personal websites, podcast host pages, and similar profiles owned by the author. These carry less weight because they are self-asserted, but they reinforce the chain when linked bidirectionally.
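One way to keep a sameAs array in the rough priority order listed above is to rank each URL by its host. The sketch below is a heuristic under stated assumptions: the tier numbers simply mirror the order of the list, the `industry_hosts` and `owned_hosts` sets must be supplied by the operator, and all URLs are placeholders. Nothing here reflects a documented Google weighting.

```python
from urllib.parse import urlparse

# Tier order mirrors the list above; lower number sorts first.
KNOWN_TIERS = [
    ("wikidata.org", 0),
    ("wikipedia.org", 1),
    ("orcid.org", 2),
    ("linkedin.com", 3),
]


def tier(url, industry_hosts=frozenset(), owned_hosts=frozenset()):
    """Return a sort rank for a sameAs URL based on its host (heuristic)."""
    host = urlparse(url).netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    for domain, rank in KNOWN_TIERS:
        if host == domain or host.endswith("." + domain):
            return rank
    if host in industry_hosts:
        return 4
    if host in owned_hosts:
        return 5
    return 6  # unclassified: review by hand before publishing


def order_same_as(urls, industry_hosts=frozenset(), owned_hosts=frozenset()):
    """Sort URLs by tier; sorted() is stable, so ties keep their input order."""
    return sorted(urls, key=lambda u: tier(u, industry_hosts, owned_hosts))


# Placeholder URLs for illustration only.
urls = [
    "https://janedoe.example/",
    "https://www.linkedin.com/in/jane-doe-example",
    "https://orcid.org/0000-0000-0000-0000",
    "https://www.wikidata.org/wiki/Q00000000",
]
ordered = order_same_as(urls, owned_hosts={"janedoe.example"})
```

The unclassified tier exists on purpose: a URL the script cannot place is a prompt for a human decision, not something to append silently.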

The chain’s value depends on internal consistency. If an author’s site bio claims fifteen years of insurance lead-gen experience, the LinkedIn profile shows a different industry, and the Wikidata item lists a third occupation, the chain weakens rather than strengthens. Confidence emerges from agreement across nodes; disagreement triggers downgrades. This is why audits matter and why hand-built chains often outperform automated ones – automation pulls whatever profile data exists rather than ensuring profile data agrees.
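The consistency audit described above can be approximated with a small comparison over hand-collected profile data. The sketch assumes the operator has already transcribed each profile into a dictionary; the source labels, field names, and sample values are hypothetical, and the exact string matching is deliberately crude (it will flag "Acme Leads" versus "Acme Leads LLC", which is usually worth a look anyway).

```python
def find_disagreements(profiles, fields=("name", "employer", "occupation")):
    """Return fields whose normalized values differ across profile sources.

    profiles maps a source label to a dict of manually transcribed facts.
    The result maps each conflicting field to {value: [sources...]}.
    """
    issues = {}
    for field in fields:
        seen = {}
        for source, facts in profiles.items():
            value = facts.get(field)
            if value:
                seen.setdefault(value.strip().lower(), []).append(source)
        if len(seen) > 1:
            issues[field] = seen
    return issues


# Hypothetical data mirroring the mismatch scenario described above.
profiles = {
    "site_bio": {"name": "Jane Doe", "occupation": "Insurance lead generation"},
    "linkedin": {"name": "Jane Doe", "occupation": "Real estate"},
    "wikidata": {"name": "Jane Doe", "occupation": "Software engineer"},
}
issues = find_disagreements(profiles)
for field, values in issues.items():
    print(field, "->", values)
```

A non-empty result is a list of profiles to correct at the source, not a reason to drop the profile from sameAs.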

For YMYL verticals specifically, credibility weight differs substantially by sameAs target. The table below reflects observed weighting patterns for YMYL author verification through 2025 to 2026, based on AI citation pattern analysis across financial, medical, and legal content.

| YMYL Vertical | Highest-Weight sameAs Targets | Secondary Targets | Specific to Vertical |
|---|---|---|---|
| Insurance / Financial | LinkedIn, Wikidata, FINRA BrokerCheck, state DOI license lookup | Industry association directories, conference speaker pages | NAIC member registries, AM Best analyst rosters |
| Medical / Healthcare | ORCID, NPI registry, state medical board, hospital staff page | PubMed author profile, board certification database | ABMS Certification Matters, specialty society directories |
| Legal | State bar directory, Avvo profile, ORCID | Law firm bio page, court-clerk records | Martindale-Hubbell, Super Lawyers profile |
| Lead-gen operator (cross-vertical) | LinkedIn, Wikidata, conference speaker pages | Platform certification pages, podcast guest archives | LeadsCouncil membership, ActiveProspect/Phonexa directories |

The pattern in the right two columns matters because lead-gen authors writing across YMYL verticals need to stack vertical-specific verification alongside cross-vertical anchors. A lead-gen practitioner who writes about insurance, mortgage, and Medicare leads should ideally have visibility across multiple of these registries rather than relying on LinkedIn alone.

For a deeper treatment of how Person and Organization entities compose into the broader knowledge graph, the entity graph schema guide covers the full architecture. The connection point with E-E-A-T is straightforward: the author entity is one node in a graph that also contains the publishing organization, the topics covered, and the citations made. Each node strengthens the others when cross-referenced cleanly.

AI Overviews and author authority

AI Overviews changed the visibility math by inserting a generative summary above the traditional ten blue links, citing a small number of source URLs. Citation share replaces ranking share as the primary visibility metric for queries where AI Overviews appear, which by mid-2026 is a majority of informational queries in YMYL categories.

The selection mechanism for which URLs get cited is not fully published. Observed patterns across the 2025 to 2026 window suggest the system weights a combination of: classical ranking position; structured data presence and validity; entity confidence in the author and publisher; citation density and primary-source orientation; topical alignment between the author entity’s knowsAbout profile and the query topic; and site-level trust signals such as About page completeness, disclosure presence, and content freshness.

Author authority enters this mix at multiple points. The author’s Person entity contributes to entity confidence directly. The match between author knowsAbout and query topic affects topical alignment scoring. The citation density a verified author tends to produce – because they cite primary sources at higher rates – reinforces the page-level signal. None of these inputs alone determines citation; the combination does.

The compounding effect is what operators should focus on. A single article with strong author entity, strong citation density, and clean topical alignment can outperform articles ranking higher in classical SERP for AI Overview citation purposes. Conversely, a high-ranking page from a domain with anonymous authorship can lose AI Overview slots to lower-ranking pages with verified authors. This decoupling between rank and citation is the structural change that 2026 introduced.

The implication for production strategy: treating AI Overviews as a downstream artifact of conventional SEO produces poor outcomes. The author entity, schema chain, and citation discipline must be treated as primary surfaces, with conventional ranking as a separate but related concern. For sites that already invested in LLMO citation engineering, the author-entity layer is the missing piece – schema and content optimization without verifiable authorship leaves citation share on the table.

The March 2026 spam update adds a defensive component to this picture. AI Overviews systems appear sensitive to author-domain alignment in ways enforcement signals reinforce. The update’s explicit focus on scaled content abuse – high-volume AI-generated pages without human editorial oversight – penalizes pages that lack a verifiable author entity entirely. Separately, the still-active site-reputation-abuse policy from March 2024 penalizes pages whose author-domain alignment is wrong on a different axis: an article on cryptocurrency leads, hosted on an auto-insurance domain, written by an author whose entire sameAs chain points to insurance expertise, looks anomalous on topical alignment even when the content is competent. The remedy in both cases is structural: match real human authors to verticals through the entity layer, and avoid publishing without a named author whose expertise tracks the topic.

Lead-gen specifics – single-author sites and byline schema

Lead generation sites cluster around a specific operational pattern that interacts unusually with E-E-A-T mechanics. The dominant model is the single-author or small-author site: a domain operator who writes most content, occasionally with one or two contributors. This pattern stands in contrast to the editorial-board model used by news publishers and the multi-author thought-leadership model used by enterprise SaaS.

The single-author model carries advantages and exposure points. The advantage is concentration of E-E-A-T weight: every article reinforces the same author entity, every byline strengthens the same sameAs chain, and citations across articles compound at the entity level. A single-author site that runs the playbook correctly produces an unusually strong author entity for its domain age. The exposure point is fragility: any weakness in the author chain affects every article on the site, and any topical drift away from the author’s verified expertise penalizes proportionally more content.

Byline schema implementation for single-author sites should follow a consistent pattern across every article. The author Person entity gets defined once, ideally at a stable @id at the canonical author page (/author/[slug]/#person), and referenced from every article via Article.author: { @id: "..." }. This avoids duplication and lets the entity definition mature in one place rather than fragmenting across articles. Updates to credentials, sameAs additions, or knowsAbout revisions propagate automatically.
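The define-once, reference-everywhere pattern can be sketched as follows. The helper name `build_article`, the example.com URLs, and the headline are assumptions for illustration; the point is only that `author` carries an `@id` reference and no inline Person fields.

```python
import json

# Hypothetical identifiers; the Person and Organization are defined elsewhere.
AUTHOR_ID = "https://example.com/author/jane-doe/#person"
PUBLISHER_ID = "https://example.com/#organization"


def build_article(headline, url, date_published, author_id, publisher_id):
    """Build Article JSON-LD that points at existing entities by @id."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": date_published,
        # Reference only: the full Person lives on the canonical author page.
        "author": {"@id": author_id},
        "publisher": {"@id": publisher_id},
    }


article = build_article(
    headline="How consent capture works for insurance leads",
    url="https://example.com/consent-capture/",
    date_published="2026-01-15",
    author_id=AUTHOR_ID,
    publisher_id=PUBLISHER_ID,
)
print(json.dumps(article, indent=2))
```

Because every article emits the same two-line reference, a change to the author's credentials or sameAs array touches one definition rather than hundreds of pages.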

For sites with multiple contributors, the same pattern extends with one Person @id per author and Article schema pointing to the correct one per byline. The discipline that matters here is not adding a generic “Editorial Team” placeholder for any article whose specific author is unclear – that placeholder is exactly the anonymous authorship pattern AI Overviews discount. Either name the author or do not publish until the author is namable.

The TCPA layer adds a financial dimension absent from most other E-E-A-T discussions. TCPA violations carry $500 to $1,500 statutory damages per call or text, and class-action exposure regularly reaches eight figures. Author-entity verification interacts with TCPA defense through trust signal coherence: a domain that publishes detailed, dated, authored compliance content is in a structurally stronger position when defending against bad-faith litigation claims than a domain with anonymous compliance pages. The byline schema is part of the documentary record.
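To see how the per-violation figures reach eight-figure exposure, the arithmetic can be written out directly. This sketch uses only the $500 and $1,500 figures cited above and a hypothetical count of 10,000 texts; it ignores defenses, settlements, and any other legal nuance, so it is an order-of-magnitude illustration and not a damages model.

```python
# Statutory damages per violation, as cited above (47 U.S.C. § 227).
MIN_PER_VIOLATION = 500
MAX_PER_VIOLATION = 1_500


def exposure_range(violations: int) -> tuple[int, int]:
    """Return (low, high) statutory exposure in dollars for a violation count."""
    if violations < 0:
        raise ValueError("violations must be non-negative")
    return violations * MIN_PER_VIOLATION, violations * MAX_PER_VIOLATION


# Hypothetical campaign: 10,000 texts sent without valid consent.
low, high = exposure_range(10_000)
print(f"${low:,} to ${high:,}")  # prints "$5,000,000 to $15,000,000"
```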

The further connection runs through transparency pages. A site whose author page lists specific TCPA training, ActiveProspect or Jornaya certification, and named compliance officer (when distinct from the author) presents a coherent operational picture. That coherence is itself an E-E-A-T signal, and it is also a litigation defense element when paired with TrustedForm or Jornaya implementation. The same artifacts serve both audiences.

For sites still building the foundational layer, the LLMO lead generation guide covers how citation engineering interacts with author and entity infrastructure. The two layers reinforce each other; neither works in isolation.

The About-methodology-disclosure triad

E-E-A-T scrutiny in 2026 extends beyond the author entity into three site-level pages that function as a unit: About, methodology, and disclosure. Each carries a distinct verification function, and weakness in any of the three undermines the strength of the others.

About. The page that grounds the publishing organization in physical reality. Required elements include legal entity name, business address (real, not a virtual office that resolves to a UPS Store), formation jurisdiction, named leadership beyond the author, contact methods that work, and a substantive narrative of the site’s editorial mission. Optional but valuable: photos of leadership, office, or operations; founding-date timeline; revenue model transparency. The About page should mirror in HTML what the Organization schema asserts in JSON-LD. Mismatches between visible content and schema claims trigger downgrades in trust signal scoring.
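A rough way to catch schema-versus-page mismatches on the About page is to check that each string asserted in the Organization markup also appears in the visible text. The sketch below uses only Python's standard library; the function names, the chosen fields, and the sample HTML are assumptions, and the substring match is deliberately naive (it will not handle reformatted phone numbers or addresses).

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping the contents of script and style tags."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def visible_text(html):
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    # Collapse whitespace and lowercase for a forgiving comparison.
    return " ".join(" ".join(parser.chunks).split()).lower()


def missing_from_page(org_schema, html, fields=("name", "legalName", "telephone")):
    """List schema fields whose string value is absent from the visible page."""
    text = visible_text(html)
    return [
        field for field in fields
        if isinstance(org_schema.get(field), str)
        and org_schema[field].lower() not in text
    ]


# Hypothetical About page: the legal name appears only inside the JSON-LD.
about_html = (
    "<html><body><h1>Example Leads</h1><p>Call 555-0100</p>"
    '<script type="application/ld+json">{"legalName": "Example Leads LLC"}</script>'
    "</body></html>"
)
org = {"name": "Example Leads", "legalName": "Example Leads LLC", "telephone": "555-0100"}
missing = missing_from_page(org, about_html)
print(missing)
```

Anything the check reports is a candidate for adding to the visible page or removing from the schema, whichever reflects the truth.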

Methodology. The page that explains how content gets produced, fact-checked, and updated. For lead generation, methodology pages typically cover: source-tier hierarchy (which categories of source are used and which avoided); fact-check process (who reviews what, against which standards); update cadence (how often older articles are reviewed); correction policy (what happens when errors are identified, with examples). The methodology page is where lead-gen sites can differentiate from generic content mills, because the methodology of a competent operator is materially different from the methodology of an outsourced content factory, and that difference is documentable.

Disclosure. The page that handles affiliate relationships, sponsored content, lead-routing economics, and any other commercial arrangement that affects content. For lead-gen sites, disclosure is unusually important because the entire revenue model often involves arrangements that readers cannot infer from article surface. A disclosure page should cover: lead-buyer relationships (with named partners where possible); affiliate network participation; advertorial conventions; and the rules under which commercial relationships affect coverage decisions. Strong disclosure pages reduce trust risk; weak or absent disclosure pages amplify it.

The triad functions as a cluster because verification systems read the three together. A site with a strong About page but no methodology page reads as legitimate but unaccountable. A site with strong methodology but weak disclosure reads as accountable but commercially opaque. A site with strong disclosure but a hollow About page reads as transparent about ads but unsubstantial as a publisher. The combination must be coherent.

For lead generation sites specifically, a fourth page often reinforces the triad: a compliance page covering TCPA, state mini-TCPA exposure, DNC scrubbing, and consent platforms used. This is not strictly E-E-A-T territory, but it functions as a trust signal in the same way and carries the same verification logic. The compliance page should match what the technology stack actually does – claiming TrustedForm integration without implementing it is a worse signal than not claiming it at all, because it produces a verifiable mismatch.

The 90-day E-E-A-T audit playbook

A disciplined 90-day program covers most of the work for a single-author or small-team lead-gen site. The structure below assumes a site with 100 to 500 articles, one to three primary authors, and existing schema infrastructure that has not been audited against 2026 standards. Larger sites extend the timeline by adding editorial-board entities and per-vertical sub-audits.

Days 1 to 30: Author entity and sameAs assembly. The first month rebuilds the foundation. Inventory existing author pages and bylines. For each author, compile credential evidence: certifications (with verifiable URLs), conference appearances, podcast guest history, published bylines on third-party sites, education, prior employment. Create or refresh LinkedIn profiles to match the canonical author bio. Register or update ORCID identifiers if applicable. Audit Wikipedia presence – most authors will not have one, which is fine; do not invent one. Compile the sameAs array in priority order. Update the author page HTML to display credentials prominently, then update Person schema to mirror the visible content. Validate using Schema.org’s validator and Google’s Rich Results Test.

Days 31 to 60: Triad pages and citation density. The second month addresses site-level trust infrastructure. Rebuild About, methodology, and disclosure pages as a coordinated set. Ensure each page references the others naturally and that content matches Organization schema. For lead generation, add or refresh the compliance page covering TCPA stack and consent platforms in use. Run a citation density audit across top 20 to 30 traffic-driving articles: do they cite primary sources with named, dated attribution? If not, add citations from the source-tier hierarchy in the methodology page. This is also the right window to update outdated articles, refresh dates legitimately (only when content actually changed substantively), and remove articles whose topic falls outside author expertise.

Days 61 to 90: Wikidata, byline rollout, and measurement. The third month moves to advanced verification and measurement. Create a Wikidata item for the publishing organization at minimum, and for the primary author when notability supports it. Wikidata items require external references; the methodology and About pages built in the prior month provide them. Roll out byline schema across all articles using @id references to the canonical Person entity rather than inline definitions. Set up measurement: AI Overview citation tracking via tools that monitor SERP features; Google Search Console queries for branded author searches; entity-level Search Console reports if available. Establish a quarterly review cadence so the audit becomes ongoing rather than one-time.
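During the byline rollout, a small audit can confirm that each article's author is an @id reference to a known Person and not an inline definition or a generic placeholder. The sketch assumes each article's JSON-LD has already been extracted into a dictionary; the function name, the status strings, and the placeholder list beyond "Editorial Team" are illustrative assumptions.

```python
# "Editorial Team" is the placeholder named earlier; the others are assumed examples.
GENERIC_NAMES = {"editorial team", "staff", "admin"}


def audit_author(article, known_person_ids):
    """Classify the author markup of one Article JSON-LD dict."""
    author = article.get("author")
    if author is None:
        return "missing author"
    if not isinstance(author, dict):
        return "author is not an object"
    if str(author.get("name", "")).strip().lower() in GENERIC_NAMES:
        return "generic placeholder byline"
    if set(author) != {"@id"}:
        return "inline author definition"
    if author["@id"] not in known_person_ids:
        return "unknown Person @id"
    return "ok"


# Hypothetical inputs for illustration.
KNOWN = {"https://example.com/author/jane-doe/#person"}
samples = {
    "referenced": {"author": {"@id": "https://example.com/author/jane-doe/#person"}},
    "inline": {"author": {"@type": "Person", "name": "Jane Doe"}},
    "placeholder": {"author": {"@type": "Person", "name": "Editorial Team"}},
    "absent": {"headline": "No byline"},
}
results = {label: audit_author(doc, KNOWN) for label, doc in samples.items()}
for label, status in results.items():
    print(f"{label}: {status}")
```

Running the audit across all articles before and after the rollout gives a simple count of pages still carrying inline or placeholder bylines.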

Three failure modes recur across operator implementations of this playbook. The first is sameAs dishonesty – adding URLs that do not actually describe the same entity, or that go to dormant profiles. Verification systems detect this and discount accordingly; honesty is the cheaper option. The second is methodology theatre – writing a methodology page that describes practices the site does not follow. The mismatch between claim and practice is detectable through content analysis and produces worse outcomes than no methodology page at all. The third is one-time-audit thinking. E-E-A-T is a maintenance problem rather than a project. Annual or quarterly review cycles outperform single overhauls.

For sites where the playbook is the start of a broader visibility transformation, the trust architecture analysis covers how E-E-A-T integrates with the larger AI search infrastructure operators must understand. The 90-day audit produces the foundation; the architecture builds on it.

The compounding nature of these signals is the reason the playbook works. Each verified link strengthens the next, each cited primary source reinforces the methodology page, each byline references the same maturing Person entity. Authority produced this way is durable in ways that purchased backlinks or AI-spun content can never match. The cost is mostly attention and discipline rather than money; the return is the ability to remain visible after AI Overviews and the December core updates have finished compressing the field.

Key Takeaways

- Google added Experience to E-A-T on December 15, 2022, and Trust sits at the center of the model with the explicit caveat that untrustworthy pages have low E-E-A-T regardless of other strengths.

- The Helpful Content System merged into core ranking in March 2024, distributing E-E-A-T-style heuristics across multiple core signals and turning helpfulness into a continuous per-page signal rather than a sitewide classifier output.

- The December 2025 broad core update extended E-E-A-T scrutiny beyond YMYL into lifestyle, SaaS, e-commerce, and reviews, narrowing the gap between regulated and unregulated content production.

- AI Overview citation in 2026 leans heavily on entity confidence, which is computed by traversing sameAs chains to Wikidata, Wikipedia, LinkedIn, ORCID, and industry-specific registries; sites with thin chains lose citation share.

- Google’s March 2026 spam update was a SpamBrain refinement targeting scaled content abuse – sites publishing large volumes of AI-generated pages without editorial oversight – with affected domains reporting 50-80% traffic drops; the site-reputation-abuse policy introduced in March 2024 (and tightened November 2024) remains active alongside it. Both enforcement patterns penalize the same structural tell: an absent or unverifiable author entity, or content topics misaligned with the author’s documented expertise.

- A compliant Person entity carries name, jobTitle, image, description, worksFor, knowsAbout, hasCredential, and a sameAs array prioritized by Wikidata, Wikipedia, ORCID, LinkedIn, then industry-specific authoritative profiles.

- Lead-gen single-author sites concentrate E-E-A-T weight on one entity, which compounds advantage when the chain is clean and compounds penalty when it is not – author-vertical alignment is the load-bearing decision.

- The About-methodology-disclosure triad must read coherently together; weakness in any one page undermines the others, and lead-gen sites add a compliance page covering the TCPA stack and the consent platforms in use.

- A 90-day audit covers the foundational rebuild for most sites: month one for author entity and sameAs assembly, month two for triad pages and citation density, month three for Wikidata, byline schema rollout, and citation measurement.

- Author-entity verification is the load-bearing E-E-A-T mechanism in 2026; rubric-era audits that ignore the entity layer produce work that no longer maps to how AI Overviews and core systems actually evaluate sites.

Sources

- E-A-T gets an extra E for Experience (Google Search Central Blog, December 2022)

- Search Quality Rater Guidelines (Google, latest)

- Creating Helpful, Reliable, People-First Content (Google for Developers)

- March 2024 Core Update and Helpful Content System integration analysis (Glenn Gabe / GSQi, 2024)

- Site reputation abuse policy (Google Search Central)

- Google March 2026 spam update analysis (PPC Land, 2026)

- Schema.org Person type reference

- Schema.org sameAs property reference

- Wikidata for SEO entity knowledge graph (Over The Top SEO)

- Author page examples for E-E-A-T (Digitaloft)