A 2026 field report on the post-bot-detection arms race: how human fraud farms operate, why the standard verification stack rubber-stamps their submissions, and the six signals that finally separate paid form-fillers from genuine consumer intent.

The Decade That Made Bots Easy and Humans Hard

For most of the 2010s, lead-fraud detection meant bot detection. Operators bolted CAPTCHA onto forms, layered honeypots, watched header strings, and treated suspicious user-agent patterns as proof of fraud. The arms race ran on terms favorable to defenders: bots scaled faster than detection, but each generation eventually fell to a fingerprint, a JavaScript challenge, or a behavioral tell that humans could not fake at scale.

That arms race ended quietly around 2022, when the economics of human fraud crossed bot economics in several geographies. Anura’s 2026 research describes the inflection point clearly: a worker in Bangladesh paid roughly $120 per year, working twelve-hour shifts, can produce form submissions a sophisticated bot cannot replicate. The worker uses real fingers on real keyboards. The mouse trails are organic. The CAPTCHA solves come from the same neurons that solve every other CAPTCHA. The TrustedForm certificate documents a textbook session.

By 2026, the human-farm category has become the highest-yield fraud vector in lead generation. HUMAN Security’s 2026 State of AI Traffic & Cyberthreat Benchmark Report, drawing on more than one quadrillion interactions across the Human Defense Platform, finds that automated traffic now grows eight times faster than human traffic — but also that human-driven fraud has scaled in parallel, often blended with AI-assisted automation in hybrid operations that defy the bot-versus-human framing entirely. Fraudlogix’s 2026 ad-fraud benchmark, built on 105.7 billion impressions analyzed across 2025, puts global invalid traffic at 20.64 percent of impressions — roughly one in five — with material variation by country and a growing share attributed to human and human-augmented fraud rather than pure bots.

For lead-generation operators, this analysis examines what the new fraud topology looks like, why the existing verification stack fails to detect it, the six signal classes that do detect it, where the major fraud-prevention vendors (Forter, Sift, Anura, IPQualityScore, LexisNexis ThreatMetrix, BehavioSec) draw their detection lines, how fraud signatures shift across the major lead-gen verticals, and a 30-day audit playbook that surfaces the leakage already in production. The thesis: bot detection alone has been a solved problem for two years, and operators still relying on it are not protecting their margins — they are subsidizing Manila and Dhaka.

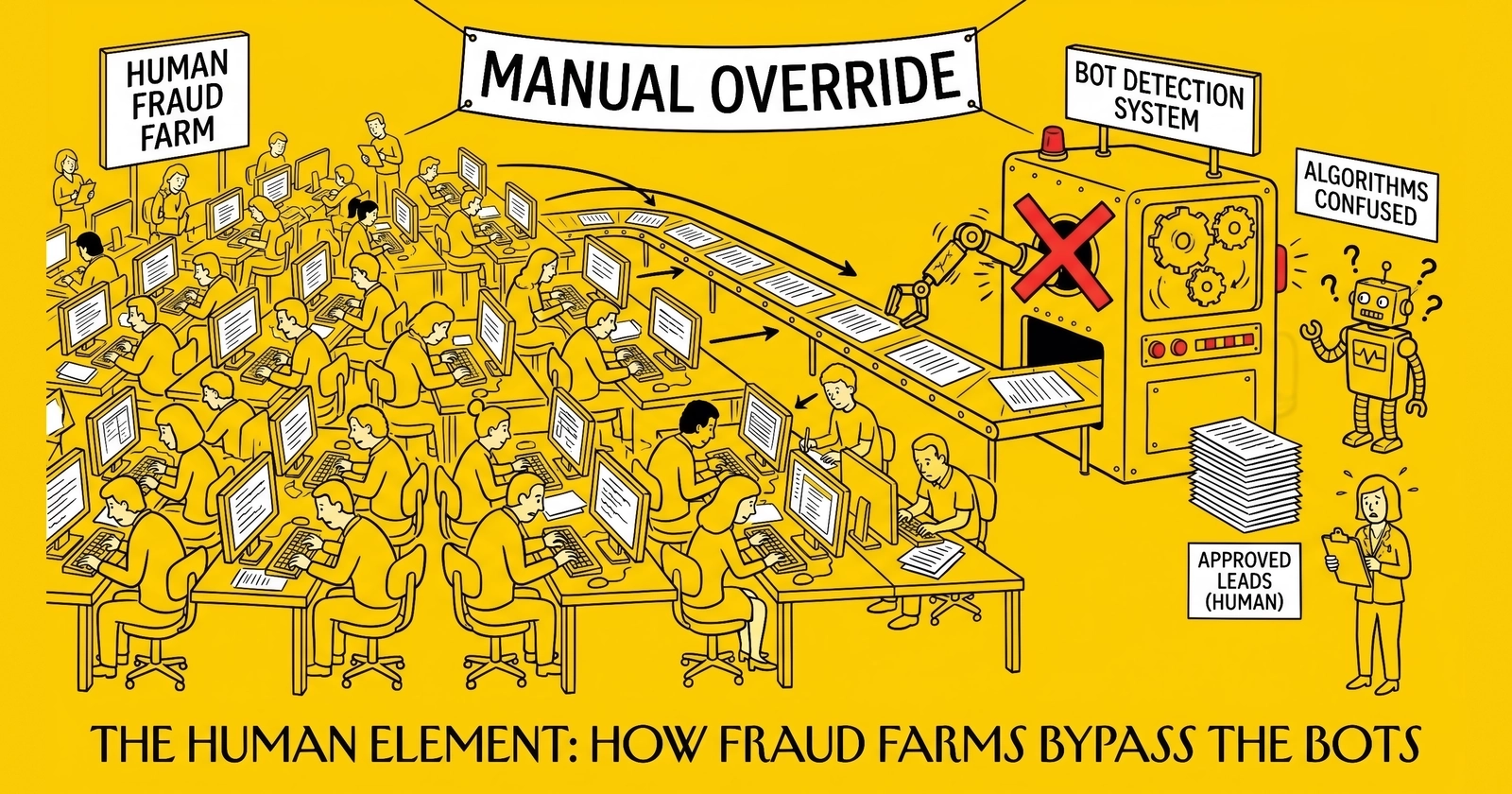

What a Human Fraud Farm Actually Looks Like

The image of fraud farms in Western reporting tends toward either dystopian (rooms of exhausted workers under fluorescent lights) or dismissive (a few teenagers running scripts in a basement). Both miss the operational reality of the 2026 industry, which has matured into a layered services market with specialization, tooling, and quality tiers.

Operations and Tiering

Three operational models dominate the category. The first, documented in Anura’s reporting, is the single-operator multi-device farm: one worker controlling between 50 and 400 devices through specialized software, alternating between them to simulate distributed traffic. These operations are common in Vietnam and the Philippines, where photographer Jack Latham documented them at length in his 2023 Beggar’s Honey project — rooms of phones strapped to wooden frames, each running an automated rotation of taps and clicks under partial human supervision.

The second model is the human-staffed call center reskinned for fraud: 100 to 1,000 workers in shift work, each handling form submissions or clicks at piece rates ranging from $1 per 1,000 actions ($0.001 per action) up to $0.01 per action, depending on task complexity. Reporting from The New Inquiry’s “Bengali Click Farmer” investigation and Garrett Bradley’s 2016 documentary Like, on Dhaka click farms, places average pay around $1 per 1,000 likes, with workers earning roughly $10 per day on ten-hour shifts and a compensation floor that can fall to roughly $120 per year for the lowest-tier operators. CHEQ’s research and Fraudlogix’s reporting confirm a similar wage range across the Philippines, Indonesia, Egypt, Sri Lanka, and Nepal.

The third model is the most operationally sophisticated: hybrid AI-human teams where automation handles the mechanical scale (loading forms, rotating proxies, generating filler data) and humans handle the parts that defeat bot detection (CAPTCHA solves, voice verification calls, occasional manual edits to make the submissions look organic). This model emerged in 2024 and now represents both the highest-quality output and the highest-margin work for farm operators, who charge premium per-action rates for AI-human hybrid leads that pass strict buyer verification.

Geography and Economics

The geographic distribution tracks labor cost and English-language ability. Documented hubs include the Philippines (English-fluent, large outsourcing infrastructure), Vietnam (lower wages, growing English fluency), Bangladesh (lowest documented wages, large unemployed-graduate population), Indonesia, Egypt, Sri Lanka, Nepal, and increasingly Venezuela for Spanish-language and Latin-American-targeted fraud. Fraudlogix’s country-level invalid-traffic data, while not a direct map of farm location, shows the downstream effect: Honduras at 67.52 percent IVT, well above any country in Western Europe, where the combined regional rate sits at 7.80 percent.

The economics for buyers of farm services are stark. A US insurance lead generator can buy 10,000 form submissions for between $300 and $1,500 from a tier-three farm, or three to fifteen cents per submission, against a downstream resale value of $25 to $80 per lead. Even a five-percent pass-through rate from farm submission to monetized lead yields 500 sold leads worth $12,500 to $40,000. That is a gross return of roughly 8:1 at the least favorable combination (highest farm price, lowest resale value) and more than 130:1 at the most favorable, before any consideration of buyer chargeback recovery (which most contracts cap at 5 to 15 percent of monthly volume).

This is why the fraud category persists despite a decade of detection investment. The asymmetry between cost-of-fraud and value-of-clean-lead is too large for bot-detection-grade defenses to close. Operators who treat fraud as a margin issue rather than a moral one understand that the question is not whether fraud exists in their flows — it is what percentage, where, and at what cost-to-detect.

Why TrustedForm and Jornaya Pass Farm Submissions

The single most expensive misconception in the lead industry is that a TrustedForm or Jornaya certificate equals a clean lead. The certificate is not, and was never marketed as, a fraud indicator. It is a session-event recorder. When a real human on a real device fills out a real form, the certificate documents that fact — accurately, completely, and unhelpfully when the human is a paid form-filler in Manila with no purchase intent.

ActiveProspect’s own product documentation makes this distinction. Steve Rafferty, ActiveProspect president, addressed the limitation in 2024 commentary on the FCC’s TCPA consent rule: certificates verify the event, not the legitimacy of the underlying intent. This is a feature, not a bug — TrustedForm’s role in the stack is to document evidence for TCPA defense, not to score lead quality. The conflation happened in marketing copy, not in product design.

The mechanics of the failure mode are worth tracing in detail. A farm worker in the Philippines opens the lead form on a phone purchased in the Philippines, configured to a US residential proxy purchased from a specialist vendor. The worker types the form fields with normal human cadence — typing speed, pause patterns, even occasional backspace corrections that mimic genuine consumer behavior. The CAPTCHA solves cleanly. The form submission fires. TrustedForm captures the entire session: keystroke timing, mouse trail, time on page, scroll depth, focus events. Every signal reads human, because every signal is human. The certificate validates. The lead enters the buyer’s CRM with full TCPA documentation.

The buyer dials the phone number. It rings to a virtual number controlled by the farm operator, who routes it to either an IVR that captures voicemail or a hired call-handler ready to verify the lead in fluent English with a generic American accent. The call-handler reads from a script confirming the consumer’s interest in the relevant product. The lead converts to “qualified” status in the buyer’s CRM, gets billed at full rate, and disappears into the conversion-tracking lag where most attribution lives. Three weeks later, when nobody on the lead list ever buys, the buyer files for chargeback under the standard 30-day return window, recovers some fraction, and absorbs the rest as cost of business.

For a more detailed treatment of the TrustedForm and Jornaya limitations specifically, see TrustedForm vs Jornaya: complete comparison. For the broader lead-validation context, see real-time lead validation before purchase.

The strategic implication: certificates are necessary but insufficient. An operator running TrustedForm-only defense in 2026 is running 2018-era infrastructure. Adding fraud detection on top of certificate generation is the minimum modern stack.

Six Detection Signals That Catch Human Farms

The signals that catch human fraud farms exist precisely because the farms cannot perfectly replicate the statistical fingerprints of distributed consumer traffic. Each individual submission can be made to look human-organic. The aggregate behavior of a farm cannot. Detection happens in the seams between submissions — the patterns that emerge when 100 leads from one farm pass through a system in 24 hours.

The six signal classes below, drawn from Anura’s behavioral fingerprinting research, IPQualityScore’s threat-intel methodology, LexisNexis ThreatMetrix’s network analysis, and HUMAN Security’s bot-mitigation framework, define the modern detection stack.

Geo-Velocity Anomalies

A single device or browser fingerprint that submits leads claiming residence in geographically inconsistent locations within minutes — Texas at 09:14, Florida at 09:21, California at 09:34 — is almost certainly a farm. Legitimate consumers do not relocate across state lines on minute-level timescales. The signal becomes powerful when paired with claimed-state versus IP-state mismatch: a lead claiming a Florida ZIP code submitted from a Manila IP routed through a Texas residential proxy.
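As a rough illustration of the geo-velocity check, the sketch below groups submissions by fingerprint and flags any fingerprint that claims two or more states inside a sliding time window. The tuple layout, the 30-minute window, and the function name `geo_velocity_flags` are assumptions made for the example, not a vendor API.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def geo_velocity_flags(submissions, window=timedelta(minutes=30), min_states=2):
    """Return fingerprints claiming >= min_states distinct states within `window`.

    `submissions` is an iterable of (fingerprint, claimed_state, submitted_at)
    tuples; this layout is hypothetical.
    """
    by_fp = defaultdict(list)
    for fp, state, ts in submissions:
        by_fp[fp].append((ts, state))

    flagged = set()
    for fp, events in by_fp.items():
        events.sort()  # chronological order
        start = 0
        for end in range(len(events)):
            # Advance the left edge until the window spans at most `window`.
            while events[end][0] - events[start][0] > window:
                start += 1
            states = {state for _, state in events[start:end + 1]}
            if len(states) >= min_states:
                flagged.add(fp)
                break
    return flagged

day = datetime(2026, 1, 15)
sample = [
    ("fp-a", "TX", day.replace(hour=9, minute=14)),
    ("fp-a", "FL", day.replace(hour=9, minute=21)),
    ("fp-a", "CA", day.replace(hour=9, minute=34)),
    ("fp-b", "OH", day.replace(hour=9, minute=0)),
    ("fp-b", "OH", day.replace(hour=9, minute=5)),
    ("fp-c", "TX", day.replace(hour=9, minute=0)),
    ("fp-c", "FL", day.replace(hour=12, minute=0)),
]
flagged = geo_velocity_flags(sample)
```

Here only `fp-a` is flagged: `fp-b` never changes state, and `fp-c` changes state three hours apart, outside the window. In practice the window length would be tuned against real traffic, and shared devices (households, kiosks) would need an allowance.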

For solar leads specifically, this pattern intersects with property-ownership verification, which is treated in solar lead fraud and homeownership verification. The combination of geo-velocity flag plus property mismatch is one of the highest-confidence farm indicators in the solar vertical.

Device Clustering

Real consumer traffic produces a long tail of unique device fingerprints — each visitor on a different combination of operating system, browser version, screen resolution, installed fonts, GPU, audio stack, and a dozen other attributes. Farm traffic clusters: 50 form submissions from 50 fingerprints that share an unusually high number of attributes (same OS minor version, same screen resolution, same font set, same GPU driver) reveal that the underlying devices are sourced from a small batch of identical hardware purchased and configured uniformly. LexisNexis ThreatMetrix’s Digital Identity Network is particularly strong on this signal because it correlates device fingerprints across all client traffic, surfacing devices seen at one operator now appearing at another.
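One simple way to quantify this clustering is the average fraction of attributes shared across every pair of fingerprints from a source. The sketch below assumes fingerprints arrive as dictionaries with the five hypothetical attribute names in `ATTRS`; a real fingerprinting SDK will expose different and far more numerous fields.

```python
from itertools import combinations

# Hypothetical attribute names; substitute whatever the fingerprinting SDK exposes.
ATTRS = ("os_version", "screen", "font_set", "gpu_driver", "browser_version")

def shared_fraction(a, b, attrs=ATTRS):
    """Fraction of attributes on which two fingerprint dicts agree."""
    return sum(a[k] == b[k] for k in attrs) / len(attrs)

def mean_pairwise_similarity(fingerprints, attrs=ATTRS):
    """Average shared_fraction over all pairs; 0.0 when fewer than two fingerprints."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(shared_fraction(a, b, attrs) for a, b in pairs) / len(pairs)

# Three devices from one uniformly configured batch: only the browser build differs.
farm_batch = [
    {"os_version": "14.2", "screen": "1080x2400", "font_set": "f1",
     "gpu_driver": "g7", "browser_version": build}
    for build in ("120.0", "120.1", "121.0")
]
# Three unrelated consumer devices.
organic = [
    {"os_version": "14.2", "screen": "1080x2400", "font_set": "f1",
     "gpu_driver": "g7", "browser_version": "120.0"},
    {"os_version": "17.4", "screen": "1170x2532", "font_set": "f9",
     "gpu_driver": "a3", "browser_version": "17.4"},
    {"os_version": "10.0", "screen": "1920x1080", "font_set": "f4",
     "gpu_driver": "n5", "browser_version": "124.0"},
]

farm_similarity = mean_pairwise_similarity(farm_batch)
organic_similarity = mean_pairwise_similarity(organic)
```

The farm batch scores about 0.8 and the organic set scores 0.0. Popular phone models also share many attributes, so any cutoff should be calibrated against the similarity seen in known-clean traffic rather than set in the abstract.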

Behavioral Entropy

Real humans produce noisy mouse traces, variable typing cadence, irregular pause patterns, and idiosyncratic field-completion sequences. Farm workers produce statistical regularities: tighter distributions of time-on-field, more uniform mouse-trace shapes, fewer accidental focus changes, more consistent submit-button click positions within the button bounding box. None of these signals is conclusive on a single submission. Across 50 submissions from one farm, the entropy distribution diverges measurably from genuine traffic. Anura’s behavioral fingerprinting and BioCatch’s banking-grade keystroke dynamics both operate on this signal class, with BioCatch’s corpus of more than 16 billion user sessions analyzed monthly providing some of the deepest reference data in the industry.
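A minimal way to see the effect is to bucket one behavioral measurement, such as seconds spent on a single form field, and compute the Shannon entropy of the resulting histogram per source. This is a sketch, not how Anura or BioCatch score sessions; the one-second bin width and the sample values are invented for illustration.

```python
import math
from collections import Counter

def binned_entropy(values, bin_width=1.0):
    """Shannon entropy in bits of `values` after bucketing into fixed-width bins."""
    bins = Counter(int(v // bin_width) for v in values)
    total = sum(bins.values())
    return sum(-(count / total) * math.log2(count / total) for count in bins.values())

# Seconds spent on one form field across eight submissions per source (invented data).
farm_times = [4.1, 4.3, 4.0, 4.4, 4.2, 4.1, 4.3, 4.2]
organic_times = [2.0, 9.5, 4.2, 17.8, 6.1, 1.3, 12.4, 3.7]

farm_entropy = binned_entropy(farm_times)        # every value lands in one bin
organic_entropy = binned_entropy(organic_times)  # every value lands in its own bin
```

The farm sample collapses into a single bin (entropy 0 bits) while the organic sample spreads across eight bins (3 bits). With real data the gap is smaller and noisier, which is why the comparison is made across dozens of submissions per source rather than on any single lead.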

Residential Proxy Detection

Network-level detection is the most-evolved signal class. Specialist providers — IPQualityScore, LexisNexis ThreatMetrix, Castle, SpyCloud — maintain honeypot networks and infiltrate proxy botnets to identify residential-proxy exit nodes in real time. IPQS reports detecting more than one billion threat events per day, with proprietary data on residential-proxy networks aggregated from infiltrated botnets and malware command-and-control servers. The detection rate has improved substantially between 2023 and 2026 as the category matured, but the cat-and-mouse nature of the problem means that any single vendor’s detection rate decays without continuous reinvestment in fresh intelligence.

Submit-Time Clustering

Genuine consumer traffic distributes across the day according to predictable patterns: concentrations during commute hours and lunch breaks, a decline overnight, and weekend patterns that differ from weekdays. Farm traffic clusters around shift times in the source country, producing anomalous concentrations of submissions at hours such as 02:00 to 04:00 Eastern Time, when US consumers are largely asleep but it is early-to-mid afternoon in Manila and Dhaka. Operators can detect this signal cheaply with basic time-series analysis on submit timestamps grouped by source, a flag worth implementing even at low budgets.
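The time-series check can start as something as small as the sketch below, which computes each source's share of submissions inside an overnight window and flags sources above a cutoff. It assumes submit hours have already been converted to Eastern local time; the 30 percent cutoff and the minimum sample size are placeholder values, not recommendations.

```python
def window_share(hours, start=2, end=4):
    """Share of submissions whose local hour h satisfies start <= h < end."""
    if not hours:
        return 0.0
    return sum(start <= h < end for h in hours) / len(hours)

def flag_sources(hours_by_source, threshold=0.3, min_count=10):
    """Sources with enough volume whose overnight share exceeds `threshold`."""
    return {
        source
        for source, hours in hours_by_source.items()
        if len(hours) >= min_count and window_share(hours) > threshold
    }

# Submit hours (0-23, Eastern local time) per source; invented data.
hours_by_source = {
    "aff-organic": [9, 12, 13, 18, 20, 8, 12, 19, 21, 3],
    "aff-suspect": [2, 3, 2, 3, 3, 2, 14, 3, 2, 3],
    "aff-tiny": [2, 3],  # too few submissions to judge
}
suspect_sources = flag_sources(hours_by_source)
```

Only `aff-suspect` is flagged here: it places nine of ten submissions in the window, against one of ten for `aff-organic`. A production version would compare each source against its own vertical's baseline, since some verticals legitimately skew late.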

Known Farm IP and ASN Ranges

The lowest-cost signal is also the lowest-precision: maintaining blacklists of ASN ranges and IP blocks associated with documented farm operations. Threat-intel feeds from HUMAN Security, Fraudlogix, IPQS, and others publish these regularly. The signal catches sloppy farms that have not yet rotated to fresh proxies. It misses sophisticated operators using high-quality residential proxies. It is best treated as a free first-pass filter, not a primary defense.
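A first-pass filter of this kind needs nothing beyond Python's standard `ipaddress` module. In the sketch below, the CIDR blocks are reserved documentation ranges and the ASN comes from the documentation-reserved range; they stand in for entries from a real threat-intel feed, and the function names are illustrative.

```python
import ipaddress

def build_blocklist(cidrs):
    """Parse CIDR strings into network objects once, at load time."""
    return [ipaddress.ip_network(cidr) for cidr in cidrs]

def is_blocklisted(ip, asn, networks, bad_asns):
    """True if the ASN is listed or the address falls inside any listed network."""
    addr = ipaddress.ip_address(ip)
    return asn in bad_asns or any(addr in net for net in networks)

# Placeholder entries: documentation ranges, not real farm infrastructure.
networks = build_blocklist(["203.0.113.0/24", "198.51.100.0/24"])
bad_asns = {64500}

hit_by_ip = is_blocklisted("203.0.113.77", 64999, networks, bad_asns)
hit_by_asn = is_blocklisted("192.0.2.10", 64500, networks, bad_asns)
clean = is_blocklisted("192.0.2.10", 64999, networks, bad_asns)
```

The linear scan over networks is adequate for a few thousand entries; larger feeds would call for a prefix tree or a vendor lookup service.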

Signal Combination Logic

No single signal is sufficient. Two or more signals firing simultaneously on a single lead is a high-confidence flag in production environments — operators implementing this logic in 2025 and 2026 typically find that 5 to 15 percent of their lead volume falls into the multi-signal category, with conversion rates on flagged leads sitting at one-third or less of clean traffic. The economics of the rejection decision become straightforward at that conversion gap.
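The two-or-more rule reduces to counting which signal classes fired on a lead. The sketch below assumes each upstream detector has already produced a boolean; the `SignalHits` field names are invented for illustration.

```python
from dataclasses import dataclass, fields

@dataclass
class SignalHits:
    """One boolean per signal class; field names are hypothetical."""
    geo_velocity: bool = False
    device_cluster: bool = False
    low_entropy: bool = False
    residential_proxy: bool = False
    submit_time_cluster: bool = False
    known_bad_ip: bool = False

    def count(self):
        """Number of signal classes that fired on this lead."""
        return sum(getattr(self, f.name) for f in fields(self))

def is_high_confidence(hits, min_signals=2):
    """Multi-signal rule: flag when at least `min_signals` classes fire together."""
    return hits.count() >= min_signals

single = SignalHits(known_bad_ip=True)
multi = SignalHits(residential_proxy=True, device_cluster=True)
```

With these inputs, `is_high_confidence(single)` is `False` and `is_high_confidence(multi)` is `True`. Treating all six classes as equal votes is the simplest possible combination; weighting by each signal's observed precision is a natural next step once backtest data exists.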

For a complementary treatment of bot-specific detection signals (which still have value as a layer beneath farm detection), see bot detection and CAPTCHA implementation.

| Detection Signal | Farm Behavior That Triggers It | Primary Vendor Coverage | Implementation Difficulty |
|---|---|---|---|
| Geo-velocity anomaly | Same fingerprint claiming multiple states in minutes | Forter, Sift, ThreatMetrix | Low |
| Device clustering | Multiple identities from near-identical hardware fingerprints | ThreatMetrix, Forter, Sift | Medium |
| Behavioral entropy | Tighter-than-organic distributions of typing, mouse, time-on-field | Anura, BioCatch, Sift | High |
| Residential-proxy detection | Submissions routed through infiltrated proxy networks | IPQS, ThreatMetrix, Castle | Medium |
| Submit-time clustering | Anomalous concentration around source-country shift hours | In-house analytics, Anura | Low |
| Known farm IP/ASN ranges | IPs from documented farm-associated address blocks | Threat-intel feeds (free or via vendor) | Low |

Source: Synthesized from Anura behavioral fingerprinting documentation (2026), LexisNexis ThreatMetrix product specifications, IPQualityScore threat-intel methodology, BioCatch behavioral biometrics solution brief (2025), and HUMAN Security 2026 benchmark report.

Vendor Coverage: Where the Detection Stack Draws Lines

The fraud-prevention vendor market in 2026 is segmented less by capability than by origin story. Each major platform was built for a specific adjacent problem — e-commerce chargebacks, banking account takeover, ad-network click validation — and brought its detection stack into lead generation as a secondary use case. Understanding the origin tells operators which signals each vendor handles natively versus which require integration work.

Forter emerged from e-commerce chargeback prevention. Its core competence is real-time decisioning on transactional patterns — the model trained on tens of billions of e-commerce sessions where the cost-of-error (issuing a chargeback) is well-understood. For lead-gen operators selling immediately monetized leads (calls, transfers, exclusive contracts), Forter’s transactional decisioning translates well. Its weakness is integration: lead-gen-specific data fields (TCPA consent flags, source-affiliate IDs, vertical metadata) require custom mapping that Forter’s e-commerce-default schema does not handle natively.

Sift holds the broadest installed base. G2’s Fall 2025 Reports placed Sift at the top of multiple fraud-detection categories, and Sift’s Global Data Network processed approximately one trillion events in 2025. The breadth is the differentiator: a fraud signature that surfaces on one Sift customer becomes detection signal for every other customer. For operators in high-volume verticals (auto insurance, home services), Sift’s network effect is meaningful. For low-volume verticals or geographies where Sift’s customer base is thinner, the network effect attenuates.

Anura is the most lead-gen-native of the major platforms. Built specifically for ad fraud and click farm detection, Anura’s behavioral fingerprinting, technical fingerprinting, network verification, and session mapping stack maps directly to the lead-gen fraud surface. Anura claims 99.999 percent accuracy on its detection script, with explicit zero-false-positive design (only flagging visitors when 100 percent confident they aren’t real). The trade-off: Anura’s signal coverage is narrower than Sift’s, focused on the ad-fraud and click-farm problem rather than the full identity-and-payment spectrum that Sift addresses.

LexisNexis ThreatMetrix is the device-intelligence and digital-identity layer. ThreatMetrix’s strength is the Digital Identity Network — a cross-customer view of device fingerprints, behavioral patterns, and identity reuse drawing on more than 78 billion data records and 1.4 billion tokenized digital identities, processing roughly 110 million authentication and trust decisions per day. For operators who need device clustering and identity-reuse detection, ThreatMetrix is the category leader. It is also the most expensive integration in the category, with seven-figure annual contracts the norm for enterprise customers.

IPQualityScore (IPQS) sits at the network-layer detection tier. Its proxy detection and IP intelligence are widely considered best-in-class for residential-proxy detection specifically, with proprietary data from infiltrated botnets and a honeypot network producing detection rates competitors struggle to match. For operators who need network-layer signals at lead-gen-friendly pricing, IPQS is often the default. It pairs naturally with TrustedForm or Jornaya certificates for a layered defense.

BehavioSec (now operating under LexisNexis Risk Solutions) and BioCatch are the behavioral-biometrics specialists. Both are dramatically over-specified for most lead-gen operators — built for banking fraud where false-negative cost runs in millions per incident. BehavioSec’s strength is Stockholm-bred keystroke and pointer analytics, integrated with the ThreatMetrix Digital Identity Network. BioCatch — acquired by Permira at a $1.3 billion valuation in May 2024 and now expanding via a strategic partnership announced with Nasdaq Verafin in September 2025 — analyzes more than 16 billion user sessions monthly and protects over 500 million digital banking customers. For a lead-gen operator running high-value verticals where a single bad lead exceeds $80 in cost, the behavioral-biometrics layer can be justified. For most operators, the behavioral signals bundled into Anura, Sift, or Forter cover the practical detection need.

Castle occupies a middle-market niche, particularly strong for SaaS account-takeover prevention but increasingly used in lead-gen as a lighter-weight ThreatMetrix alternative. SpyCloud focuses on credential exposure and account-takeover, less directly applicable to inbound lead fraud but relevant for operators running buyer-portal and CRM access controls.

The strategic question for an operator is not which vendor is best — it is which combination of vendors maps to the specific fraud surface the operator’s lead flows expose. A pure-affiliate aggregator running 50 sources with high churn needs different coverage than an exclusive solar lead generator running 5 owned-and-operated funnels. The vendor-selection logic flows from the fraud topology, not from market-share rankings. For a deeper treatment of vendor evaluation in lead generation broadly, see evaluating lead vendors questions before buying.

Vertical-Specific Fraud Signatures

Human fraud farms do not produce uniform output. The signatures shift by vertical because the buyer verification stacks shift by vertical, the lead values shift, and the qualifying questions farm operators must train their workers to handle shift. Treating “lead fraud” as a single category is the most common mistake operators make in detection design.

Insurance Verticals

Auto and home insurance leads command between $20 and $80 per lead at the affiliate-network tier, with exclusive-buyer pricing reaching $150 or more for premium ZIP codes. Farm operators target this vertical with high frequency because the buyer verification stack is predictable: phone validation, address validation, prior-policy check (for auto), homeownership check (for home). Farms have been training workers since 2022 to pass these checks, with three signature patterns dominating: fabricated-but-plausible vehicle details (year, make, model that exist but do not match the claimed VIN), homeownership claims at apartment-complex addresses, and “just-shopping” interest signals that score well in lead-buyer scoring models but predict zero conversion.

The insurance-fraud-pattern detail is treated at length in insurance lead fraud patterns and detection. The relevant point for the human-farm lens: insurance fraud detection has matured furthest, which means the farms targeting insurance are also the most sophisticated. Operators in this vertical face the highest detection bar.

Solar Verticals

Solar leads pay between $40 and $200 per lead, with exclusive premium leads in California, New York, and Massachusetts reaching $250 or more. The fraud surface here is dominated by two patterns: fabricated homeownership (the single most common solar fraud category), and synthetic-identity layering, where farm-generated leads are paired with stolen or fabricated address records to pass property-data checks (ATTOM, CoreLogic, Black Knight, DataTree). The 7,000 FTC solar-fraud complaints filed in 2025, while not all attributable to farms, indicate the volume of bad data flowing through the vertical.

Mortgage Verticals

Mortgage leads are higher-value ($75 to $300+) and harder for farms to fabricate convincingly because the qualifying data (current loan balance, property value, credit score range, refinance trigger event) is deeply personal and difficult to invent. Farm operations in mortgage tend toward two strategies: recycling stolen credit-application data (synthetic-identity territory rather than pure farm output), and producing low-effort “interest” leads that pass first-pass qualification but fail at income and employment verification. For the verification stack on mortgage specifically, see mortgage lead income and employment verification.

Legal Verticals

Legal leads, particularly mass-tort and personal-injury, command extreme prices ($150 to $1,500+ per qualified lead) and attract correspondingly sophisticated fraud. The signature here is different: less fabricated identity, more “manufactured incident,” meaning leads where the claimed injury or legal event is genuine in form but manufactured or solicited through misleading ad creative. The farm’s role is less in direct identity fabrication and more in driving traffic to misleading funnels that produce technically valid leads with no genuine legal claim.

| Vertical | Lead Value Range | Dominant Farm Signature | Hardest-to-Fake Verification | Vertical-Specific Detection Layer |
|---|---|---|---|---|
| Auto insurance | $20–$80 | Fabricated vehicle and policy data | VIN-to-make/model match, prior-policy check | Phone + carrier + plate verification |
| Home insurance | $25–$150 | Apartment-as-house ownership claims | Property-database ownership match | Address standardization + ownership API |
| Solar | $40–$250 | Fake homeownership at non-owned addresses | Property-database ownership match | ATTOM / CoreLogic / DataTree query |
| Mortgage refi | $75–$300 | Recycled or invented loan-balance data | Credit-pull-able SSN, employment check | Soft-pull credit + employment verification |
| Mass-tort legal | $150–$1,500 | Manufactured-incident leads from misleading funnels | Medical-record or court-filing match | Source-funnel forensic review |
| Medicare Advantage | $35–$100 | Off-AEP enrollment fraud, age fabrication | CMS plan-eligibility check | CMS API + age verification |

Source: Synthesized from LeadGen Economy vertical research, FTC solar-fraud complaint data (2025), and industry-network pricing benchmarks (2025–2026).

The implication for detection design: a single fraud-detection threshold tuned across all verticals will under-protect high-value verticals and over-reject low-value ones. Vertical-specific tuning, with detection rules that fire on vertical-specific signature patterns, is the modern practice.

The 30-Day Fraud Audit Playbook

Most operators have farm-fraud leakage they do not see, because the detection signals were never instrumented and the conversion data lag obscures the causal chain. A 30-day audit, run as a structured project with concrete deliverables, surfaces the leakage and produces the rule set to remediate it. The playbook below has been validated across multiple operators in 2025 and 2026 and produces consistent results.

Days 1 Through 7: Instrumentation

The first week is data-collection infrastructure. The deliverable is a lead-ingest pipeline that captures the following on every submission, in addition to whatever form data the operator already collects: device fingerprint (preferably from a vendor SDK such as Fingerprint, ThreatMetrix, or Anura), IP address and ASN, residential-proxy score from at least one specialist provider (IPQS or equivalent), mouse-trace summary statistics (total length, average speed, pause count), time-on-page, time-on-field for each form field, submit timestamp at millisecond precision, and source-affiliate ID with sub-source granularity.

The instrumentation should not block lead intake — leads that fail to capture the new signals should still flow, with null values logged. The goal in week one is data, not enforcement. Most operators discover during this phase that their existing form-capture pipeline lacks even basic fingerprinting; the build-or-buy decision is the first deliverable.
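One possible shape for the week-one record is sketched below: every new signal is optional and defaults to `None`, and a collector that fails leaves its field null instead of stopping intake. The field names and the `capture` helper are assumptions for the example; real collectors would wrap vendor SDK calls.

```python
from dataclasses import dataclass
from typing import Dict, Optional

@dataclass
class LeadSignals:
    """Per-submission signal record; every signal may be null."""
    lead_id: str
    source_id: str
    sub_source_id: Optional[str] = None
    submit_ts_ms: Optional[int] = None
    device_fingerprint: Optional[str] = None
    ip: Optional[str] = None
    asn: Optional[int] = None
    proxy_score: Optional[float] = None
    mouse_path_length: Optional[float] = None
    mouse_avg_speed: Optional[float] = None
    mouse_pause_count: Optional[int] = None
    time_on_page_ms: Optional[int] = None
    time_on_field_ms: Optional[Dict[str, int]] = None

def capture(lead_id, source_id, collectors):
    """Run each collector; a failure leaves that field as None and never blocks intake."""
    record = LeadSignals(lead_id=lead_id, source_id=source_id)
    for field_name, collect in collectors.items():
        try:
            setattr(record, field_name, collect())
        except Exception:
            # A real pipeline would log the failure here; the lead still flows.
            pass
    return record

def failing_proxy_lookup():
    raise TimeoutError("proxy-score provider timed out")

record = capture(
    "lead-001",
    "aff-17",
    {
        "ip": lambda: "203.0.113.77",
        "submit_ts_ms": lambda: 1768468440123,
        "proxy_score": failing_proxy_lookup,
    },
)
```

In this run `record.ip` and `record.submit_ts_ms` are populated while `record.proxy_score` stays `None`, so the null rate per field becomes a measurable output of week one.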

Days 8 Through 21: Backtest

The second and third weeks run the new signal set against the prior 90 days of leads, joined to conversion outcomes. The deliverable is a flagged-lead report grouped by source-affiliate, showing for each source the percentage of leads firing two or more anomaly signals, the conversion rate on flagged versus clean leads, and the dollar impact of the flagged segment. For operators running this audit consistently, 5 to 15 percent of total lead volume falls into the multi-signal flagged category, with conversion rates 50 to 70 percent below clean traffic. The dollar impact is straightforward to compute: flagged-lead volume times average lead cost minus flagged-lead conversion value equals the leakage.

The backtest also produces source-level differentiation. A 20-source lead operation typically finds that two or three sources account for 60 to 80 percent of flagged volume — the actionable signal. The remaining sources may need closer examination (the signal density is lower) or may be operating cleanly.
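The flagged-lead report and the leakage formula above can be sketched in a few lines. The input layout (one dict per historical lead with `source`, `signals`, `converted`, and `revenue` keys) and the flat `avg_lead_cost` are simplifying assumptions; the sample figures are invented.

```python
from collections import defaultdict

def backtest(leads, avg_lead_cost, min_signals=2):
    """Per-source flagged share, conversion rates, and leakage.

    leakage = flagged volume * average lead cost - revenue from flagged leads
    """
    stats = defaultdict(lambda: {"n": 0, "flagged": 0, "flag_conv": 0,
                                 "clean_conv": 0, "flag_rev": 0.0})
    for lead in leads:
        s = stats[lead["source"]]
        s["n"] += 1
        if lead["signals"] >= min_signals:
            s["flagged"] += 1
            s["flag_conv"] += lead["converted"]
            s["flag_rev"] += lead["revenue"]
        else:
            s["clean_conv"] += lead["converted"]

    report = {}
    for source, s in stats.items():
        clean = s["n"] - s["flagged"]
        report[source] = {
            "flagged_pct": s["flagged"] / s["n"],
            "flagged_conv_rate": s["flag_conv"] / s["flagged"] if s["flagged"] else None,
            "clean_conv_rate": s["clean_conv"] / clean if clean else None,
            "leakage": s["flagged"] * avg_lead_cost - s["flag_rev"],
        }
    return report

history = [
    {"source": "aff-1", "signals": 3, "converted": False, "revenue": 0.0},
    {"source": "aff-1", "signals": 2, "converted": False, "revenue": 0.0},
    {"source": "aff-1", "signals": 2, "converted": False, "revenue": 0.0},
    {"source": "aff-1", "signals": 0, "converted": True, "revenue": 60.0},
    {"source": "aff-1", "signals": 1, "converted": False, "revenue": 0.0},
    {"source": "aff-2", "signals": 0, "converted": True, "revenue": 60.0},
    {"source": "aff-2", "signals": 0, "converted": False, "revenue": 0.0},
]
report = backtest(history, avg_lead_cost=40.0)
```

For `aff-1`, three of five leads are flagged and none convert, so leakage is 3 × $40 − $0 = $120; `aff-2` has no flagged leads and zero leakage. Sorting the report by leakage gives the ranking of sources to investigate first.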

Days 22 Through 30: Rule Set and Remediation

The final week converts findings into operational changes. Three concrete deliverables: (1) hard-rejection rules for the worst signal combinations (typically a residential-proxy hit plus device clustering plus geo-velocity anomaly is a hard reject in production), (2) routing rules for ambiguous flagged leads (manual review queue, additional verification step, or buyer-warning flag), and (3) source-level scorecards that track flagged-lead percentage by affiliate and trigger contract review when thresholds are exceeded.
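The three deliverables can be expressed as a small routing function plus a scorecard check. In the sketch below the signal names, the three-way outcome labels, and the 15 percent contract-review threshold are all placeholders to be replaced with values from the operator's own backtest.

```python
# Combination named in the text as a typical production hard reject.
HARD_REJECT_COMBO = frozenset({"residential_proxy", "device_cluster", "geo_velocity"})

def route(fired_signals, min_signals_for_review=2):
    """Map the set of fired signal names to 'reject', 'review', or 'accept'."""
    fired = set(fired_signals)
    if HARD_REJECT_COMBO <= fired:
        return "reject"   # all three hard-reject signals fired together
    if len(fired) >= min_signals_for_review:
        return "review"   # ambiguous: manual queue or extra verification
    return "accept"

def sources_needing_review(flagged_pct_by_source, threshold=0.15):
    """Affiliates whose flagged-lead share exceeds the contract-review threshold."""
    return sorted(
        source for source, pct in flagged_pct_by_source.items() if pct > threshold
    )

decisions = [
    route({"residential_proxy", "device_cluster", "geo_velocity"}),
    route({"residential_proxy", "submit_time_cluster"}),
    route({"known_bad_ip"}),
    route(set()),
]
scorecard = sources_needing_review({"aff-1": 0.60, "aff-2": 0.04, "aff-3": 0.15})
```

The four sample leads route to reject, review, accept, and accept, and only `aff-1` crosses the scorecard threshold. Keeping the rules in plain data structures like these makes it easier to show an affiliate exactly which combination triggered a rejection.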

The remediation step also includes the conversation with affected affiliates. Operators who run this audit often find that their largest source by volume is also their largest source of farm-flagged leads — a relationship that requires renegotiation, not termination. The data from the audit is the leverage; affiliates rarely have visibility into the same signal set and respond to data they cannot dispute.

For operators considering whether to build this in-house or buy a turnkey solution, the build-or-buy logic in build vs buy lead management software applies in modified form: the instrumentation layer is build (or rent from a fingerprinting vendor), the rule logic is build, the threat-intel feed is buy. Pure-build solutions for fraud detection are uneconomic for any operator below roughly 500,000 leads per month.

What Comes Next: AI-Assisted Farms and the Voice Layer

The fraud-farm category is not stable. Two developments in 2024 and 2025 are reshaping the threat surface in ways that detection vendors are still adjusting to.

The first is AI-assisted hybrid operations. Farms that used to require a human for every CAPTCHA solve and every form field can now offload portions of the work to AI systems trained on consumer behavior datasets. The hybrid output looks more organic than pure-human farming (the AI variation introduces additional entropy) and runs at lower per-action cost. HUMAN Security’s research on the BADBOX 2.0 botnet, which comprised roughly one million Android devices and was uncovered in 2025, illustrates the scale at which automation now operates. At that scale, pure-bot and pure-human operations each produce a distinct signature, but a hybrid operation blends the two in ways that defeat single-signal detection.

The second is voice cloning. Until 2024, farm operators could not credibly handle buyers’ outbound verification calls at scale: accents, language, and prosody gave them away within thirty seconds. Voice-cloning technology crossed what researchers are calling the “indistinguishable threshold” across 2025 and 2026, with Group-IB documenting average per-incident deepfake-fraud losses of $600,000 and more than 10 percent of incidents exceeding $1 million. CX Today’s March 2026 reporting on the voice trust collapse describes the operational reality for contact centers: a farm worker can now use real-time voice modulation to handle a verification call in any accent, any gender, any age range.

For lead buyers running voice-verification programs as part of their lead-quality stack, this is a category-redefining problem. The accent-pattern checks that used to catch Manila-based callers claiming Texas residence no longer work. The remediation is harder: voice-verification vendors are scrambling to build deepfake-detection models, but the quality of generative voice models is improving faster than detection.

The longer-term implication is that detection cannot stand still. Operators who built strong farm-detection stacks in 2024 will find them eroding through 2026 and 2027 as AI augmentation propagates. The strategic posture for serious operators is continuous reinvestment in detection — not a one-time stack build, but an ongoing operating expense that scales with lead volume and competitor sophistication. The economics still favor the defender (a good detection stack costs cents per lead against fraud losses of dollars per lead), but the margin is narrower than it was three years ago and will narrow further.

For operators considering the broader question of how fraud detection fits into the lead-quality operating system, see the lead fraud detection and prevention guide and the guide to lead validation across phone, email, and address.

Key Takeaways

- Bot detection is a solved problem; human-farm detection is not. Operators relying on CAPTCHA, JavaScript challenges, and TrustedForm certificates as their primary fraud defense are running 2018-era infrastructure against 2026-era fraud. The certificate documents the event accurately, and that is precisely why farms pass through it.

- Six signal classes catch what bot tools miss. Geo-velocity anomalies, device clustering, behavioral entropy, residential-proxy detection, submit-time clustering, and known farm IP/ASN ranges are the modern stack. No single signal is sufficient; two or more firing together on a single lead is the high-confidence flag.

- Vendor selection is fraud-topology-dependent, not market-share-dependent. Forter excels at transactional decisioning, Sift at network-effect breadth, Anura at lead-gen-native fraud signals, ThreatMetrix at device intelligence, IPQS at residential-proxy detection. The right combination depends on the specific fraud surface a particular operator’s lead flows expose.

- Vertical signatures matter more than horizontal averages. Insurance, solar, mortgage, and legal each face distinct farm-fraud patterns. A single detection threshold tuned across all verticals will under-protect high-value verticals and over-reject low-value ones. Vertical-specific tuning is modern practice.

- A 30-day audit produces actionable data. Instrument the lead intake, backtest 90 days of leads against the new signal set, and build rule sets from the findings. Operators consistently find 5 to 15 percent of lead volume firing multi-signal flags, with conversion rates 50 to 70 percent below clean traffic, which makes the rejection economics straightforward.

- AI-augmented farms and voice cloning are reshaping the surface. The 2026 detection stack will not hold against 2027 farm operations without continuous reinvestment. Voice-verification programs in particular are facing a category-redefining problem as deepfake voice tools cross the indistinguishability threshold.

- Behavioral biometrics is over-engineered for most operators. BioCatch and BehavioSec were built for banking fraud where false-negative cost runs in millions per incident. For lead-gen operators below 50,000 leads per month, the behavioral signals bundled into Anura, Sift, or Forter cover the practical detection need at a fraction of the cost.

- Detection is a margin issue, not a moral one. Fraud at 5 to 15 percent of lead volume against typical lead-gen margins of 20 to 35 percent means farm leakage consumes one-quarter to two-thirds of the operating margin on a typical lead operation. Operators who treat fraud as a margin issue act on it; operators who treat it as a moral issue tend not to.
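The margin arithmetic in the last takeaway can be sketched in a few lines. This is a deliberate simplification, not the article's own model: it assumes every farm lead is a pure loss (full cost, zero revenue), so the fraud share of volume can be divided directly by the operating margin. The function name `margin_consumed` and the scenario pairings are illustrative.

```python
def margin_consumed(fraud_share: float, operating_margin: float) -> float:
    """Fraction of operating margin lost to fraud.

    Assumption: each fraudulent lead costs its full price and returns nothing,
    so fraud share (of volume) is compared directly with margin (of revenue).
    """
    if operating_margin <= 0:
        raise ValueError("operating_margin must be positive")
    return fraud_share / operating_margin


# Pairings of the ranges quoted above: 5-15% fraud, 20-35% margin.
scenarios = [(0.05, 0.35), (0.05, 0.20), (0.10, 0.30), (0.15, 0.35), (0.15, 0.20)]

for fraud, margin in scenarios:
    share = margin_consumed(fraud, margin)
    print(f"fraud {fraud:.0%} vs margin {margin:.0%}: {share:.0%} of margin consumed")
```

Under this simplification the extreme pairings span roughly one-seventh (5 percent fraud on a 35 percent margin) to three-quarters (15 percent fraud on a 20 percent margin) of operating margin, with the one-quarter to two-thirds band sitting inside that spread.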

Sources

- Anura, “How Human Fraud Farms Work and How To Stop Click Fraud,” Anura.io blog, 2026

- HUMAN Security, “2026 State of AI Traffic & Cyberthreat Benchmark Report,” HUMAN Security Research, 2026

- Fraudlogix, “Ad Fraud Statistics 2026: 20.64% IVT Rate,” Fraudlogix.com, 2026

- Fraudlogix, “Ad Fraud Rates By Country And Region (2025 Data),” Fraudlogix.com, 2025

- HUMAN Security, “Understanding Click Fraud Tactics: Advanced Bots, Click Farms, and Mobile Fraud,” HUMAN Security blog, 2025

- Group-IB, “The Voice of Fraud: Deepfake Vishing and the New Age of Social Engineering,” Group-IB Research Hub, 2025

- CX Today, “Deepfake Voice Fraud is Fueling the Voice Trust Collapse, Are You Ready?” CX Today, March 2026

- LexisNexis Risk Solutions, “ThreatMetrix Digital Identity Network — Product Documentation,” LexisNexis, 2025

- IPQualityScore, “Proxy Detection API and Lead Validation Documentation,” IPQS, 2025

- BioCatch, “Advanced Behavioral Biometrics — Solution Brief,” BioCatch, 2025

- ActiveProspect, “TrustedForm and FCC TCPA Consent Recordkeeping — Steve Rafferty Commentary,” TCPAWorld, February 2024

- The New Inquiry, “The Bengali Click Farmer,” https://thenewinquiry.com/the-bengali-click-farmer/ (primary reporting on Dhaka click-farm worker compensation that supersedes the Wikipedia summary)

- Garrett Bradley, Like (2016 documentary on Bangladesh click farms), referenced via Common Ground Research Networks coverage, https://cgnetworks.org/news/the-bengali-click-farmer

- CNN Style, “Photographer steps inside Vietnam’s shadowy ‘click farms’ (Jack Latham, Beggar’s Honey),” https://www.cnn.com/style/vietnam-farms-jack-latham-beggars-honey

Frequently Asked Questions

What is a human fraud farm?

A human fraud farm is a coordinated operation, typically located in low-wage labor markets such as the Philippines, Vietnam, Bangladesh, or Venezuela, where dozens to thousands of workers manually fill out lead forms, click ads, or perform engagement tasks for cents per action. Because the activity uses real humans on real devices, it passes traditional bot-detection checks such as CAPTCHA, JavaScript challenges, and header-anomaly screening cleanly. Anura’s research describes operations where a single worker uses hundreds of devices simultaneously, while larger farms pack hundreds of low-paid workers into rooms running long shifts. Compensation can fall below one dollar per hour, with some Bangladesh-based workers reportedly earning roughly 120 dollars per year doing this work full-time.

Why does TrustedForm or Jornaya not catch human fraud farms?

TrustedForm and Jornaya certificates document what happened during the form session — they are not fraud-detection systems. When a real human on a real device completes a real form with real keystroke patterns and real consent language, both platforms certify exactly that. The certificate is technically accurate. The lead is still fraudulent because the human had no purchase intent, the contact information may be fabricated or recycled, and the consent was coerced by piece-rate compensation rather than commercial interest. ActiveProspect itself notes that certificates verify the event, not the legitimacy of the underlying intent — buyers must layer additional verification on top.

Which detection signals actually catch human fraud farms?

Six signal classes catch what bot tools miss: geo-velocity anomalies (one user submitting from multiple jurisdictions in minutes), device clustering (the same hardware fingerprint producing multiple identities), behavioral entropy (mouse-trace patterns, time-on-field distributions, typing cadence), residential-proxy detection (IP intelligence layered on ASN and routing data), submit-time clustering (anomalous concentration of submissions at identical timestamps), and known-farm IP and ASN ranges from threat-intel feeds. None of these signals individually proves fraud, but two or more firing together on a single lead is a high-confidence flag. The combined approach is what Anura, Forter, Sift, and LexisNexis ThreatMetrix sell.
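The "two or more signals" rule can be sketched as a simple count over boolean flags. This is a minimal illustration, not any vendor's scoring model: the `LeadSignals` class, its field names, and the "review" tier for a single firing signal are assumptions made for the example, and how each flag gets computed is left to whatever detection tooling feeds it.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class LeadSignals:
    """One boolean per signal class (hypothetical field names)."""
    geo_velocity: bool = False
    device_cluster: bool = False
    low_behavioral_entropy: bool = False
    residential_proxy: bool = False
    submit_time_cluster: bool = False
    known_farm_asn: bool = False


def fired(signals: LeadSignals) -> list[str]:
    """Names of the signal classes that fired, in declaration order."""
    return [f.name for f in fields(signals) if getattr(signals, f.name)]


def classify(signals: LeadSignals, threshold: int = 2) -> str:
    """'flag' at or above the threshold, 'review' on any weaker hit, else 'pass'."""
    count = len(fired(signals))
    if count >= threshold:
        return "flag"
    if count >= 1:
        return "review"  # assumption: a lone signal is ambiguous, not proof
    return "pass"


lead = LeadSignals(residential_proxy=True, submit_time_cluster=True)
print(classify(lead), fired(lead))
```

Keeping the threshold a parameter leaves room for the vertical-specific tuning discussed in the takeaways, since a high-value vertical might tolerate a stricter rule than a low-value one.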

How does Forter compare to Sift for lead fraud detection?

Forter and Sift solve adjacent problems. Forter’s strength is real-time decisioning on transactional data — its model was trained on chargeback data from e-commerce, which translates well to lead generation when leads are immediately monetized. Sift’s strength is breadth: it operates a global data network that processed roughly one trillion events in 2025, giving it strong cross-customer signal on identity reuse and known-bad behaviors. Sift led G2’s Fall 2025 fraud-detection rankings, while Forter held a 4.5-star Gartner Peer Insights rating versus Sift’s 3.9. For lead-gen operators specifically, neither platform was purpose-built for the vertical — both require integration work to map detection outputs into ping-post or post-only lead flows.

Are click farms still concentrated in specific countries?

Yes. Fraudlogix’s 2026 ad-fraud report, analyzing 105.7 billion impressions across 2025, found invalid traffic rates ranging from 0.85 percent (Belgium) to 67.52 percent (Honduras), with Western Europe holding the cleanest aggregate at 7.8 percent and the United States at 23.69 percent. Documented human fraud farms cluster in the Philippines, Vietnam, Bangladesh, China, Indonesia, Egypt, Sri Lanka, Nepal, and Venezuela. Sophisticated operators route this traffic through residential proxies in the destination country to mask the origin, which is why country-of-IP filtering alone is no longer a reliable signal — operators must combine ASN reputation, proxy detection, and behavioral signals to catch farms running through US residential IPs.

What is the annual financial loss from human fraud farms in lead generation?

Estimates vary because the category overlaps with broader ad fraud and synthetic-identity fraud. An industry estimate, not a primary-sourced figure, places human-farm and bot-mixed lead fraud at roughly 1.3 to 2.0 billion dollars in annual losses across the U.S. insurance lead market alone — a vertical where transaction value sits between 5.2 and 6.8 billion dollars. Juniper Research projected global digital ad fraud losses at 84 billion dollars in 2023, scaling toward 172 billion by 2028, of which a growing share is attributable to human-driven fraud as bot detection has improved. Fraudlogix attributes roughly 37 billion dollars in U.S. programmatic ad spend to invalid traffic annually.

Why are deepfake voice tools changing the fraud-farm threat model?

Until 2024, human fraud farms were limited to written form submissions and clicks. Voice cloning has changed that. Group-IB’s 2025 research documented average per-incident deepfake-fraud losses of approximately 600,000 dollars, with more than 10 percent of incidents exceeding 1 million dollars. CX Today reported in March 2026 on the broader voice-trust collapse facing contact centers. For lead buyers running call-verification programs, this matters: a farm worker can now use AI voice cloning to handle the qualifying call in a different language, accent, or gender than their actual voice, which defeats accent-pattern checks that previously caught Manila-based callers claiming to be Texas homeowners.

What does a 30-day fraud-farm audit look like?

A 30-day audit runs in three phases. Days 1 through 7: instrument the lead intake to capture device fingerprint, IP and ASN, residential-proxy score, mouse-trace summary, time-on-page, and submit-timestamp on every lead. Days 8 through 21: backtest the prior 90 days of leads against the new signal set, flagging any lead with two or more anomalies and tracking conversion outcomes. Days 22 through 30: build automated rules that hard-reject the worst signal combinations and route ambiguous cases to manual review. Operators running this audit consistently find that 5 to 15 percent of leads from at least one source are flagged, with flagged leads converting at one-third or less of the clean-traffic rate, which makes the rejection economics straightforward.
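The backtest phase can be sketched as a per-source tally of flagged versus clean leads and their conversion rates. The record layout (`source`, `anomalies`, `converted`) and the sample rows are invented for illustration; a real backtest would read these from the operator's own lead store.

```python
from collections import defaultdict


def rate(cell):
    """Conversion rate for a [lead_count, conversion_count] pair, or None if empty."""
    n, conversions = cell
    return conversions / n if n else None


def backtest(leads, threshold=2):
    """Per-source flag rate and conversion rates for flagged vs clean leads.

    Each lead is a dict with 'source', 'anomalies' (int), 'converted' (bool).
    A lead counts as flagged when its anomaly count reaches the threshold.
    """
    stats = defaultdict(lambda: {"flagged": [0, 0], "clean": [0, 0]})
    for lead in leads:
        bucket = "flagged" if lead["anomalies"] >= threshold else "clean"
        cell = stats[lead["source"]][bucket]
        cell[0] += 1                       # leads in this bucket
        cell[1] += int(lead["converted"])  # conversions in this bucket
    report = {}
    for source, s in stats.items():
        total = s["flagged"][0] + s["clean"][0]
        report[source] = {
            "flag_rate": s["flagged"][0] / total,
            "flagged_conv": rate(s["flagged"]),
            "clean_conv": rate(s["clean"]),
        }
    return report


# Made-up sample rows, for illustration only.
sample = [
    {"source": "A", "anomalies": 0, "converted": True},
    {"source": "A", "anomalies": 3, "converted": False},
    {"source": "A", "anomalies": 1, "converted": False},
    {"source": "A", "anomalies": 2, "converted": False},
    {"source": "B", "anomalies": 0, "converted": True},
]
for source, row in backtest(sample).items():
    print(source, row)
```

Reporting per source rather than in aggregate matches the audit finding that the flagged share concentrates in particular sources, which is where rejection rules and manual review would be aimed first.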

Do residential proxies make farm detection impossible?

Residential proxies make IP-based filtering insufficient, not detection impossible. Specialist providers (IPQualityScore, LexisNexis ThreatMetrix, Castle, and SpyCloud) run honeypot networks and infiltrate proxy botnets to keep blacklists of known residential-proxy exit nodes current. IPQS reports detecting more than one billion threat events per day. Detection rates on residential proxies improved markedly between 2023 and 2026 as the category matured, but the arms race continues: proxy providers rotate exits aggressively, and detection vendors must reinvest continuously in fresh intelligence. Treating proxy detection as a layer rather than a solution is the only sustainable posture.

Should small operators pay for behavioral biometrics?

For most operators below roughly 50,000 leads per month, dedicated behavioral biometrics from BioCatch, BehavioSec (now LexisNexis), or comparable platforms are over-engineered. The platforms were built for banking fraud where the unit economics support seven-figure annual contracts. Small lead-gen operators get most of the practical benefit from a tier-one fraud detection platform such as Anura, Sift, or IPQS that bundles lighter behavioral signals with IP and device intelligence at lead-gen-friendly pricing. The decision threshold flips when an operator runs high-value verticals — exclusive solar leads, Medicare Advantage, mortgage refinance — where a single bad lead costs 80 dollars or more in chargeback and the volume justifies the integration.

The shift from bot-fraud to human-farm-fraud is not a temporary anomaly; it is the new equilibrium of the lead-fraud market. Detection stacks built for the 2018 threat surface fail predictably against 2026 operations, and the gap is widening as AI augmentation and voice cloning enter the farm operator’s toolkit. The operators who will preserve their margins through 2027 and beyond are the ones treating fraud detection as a continuous operating expense rather than a one-time infrastructure build, instrumenting their lead flows for the six-signal stack, and tuning rule sets to the vertical-specific signatures their fraud surfaces actually expose. Everyone else is paying Manila and Dhaka — quietly, in chargeback timing lag, and in the slow erosion of buyer trust that follows.