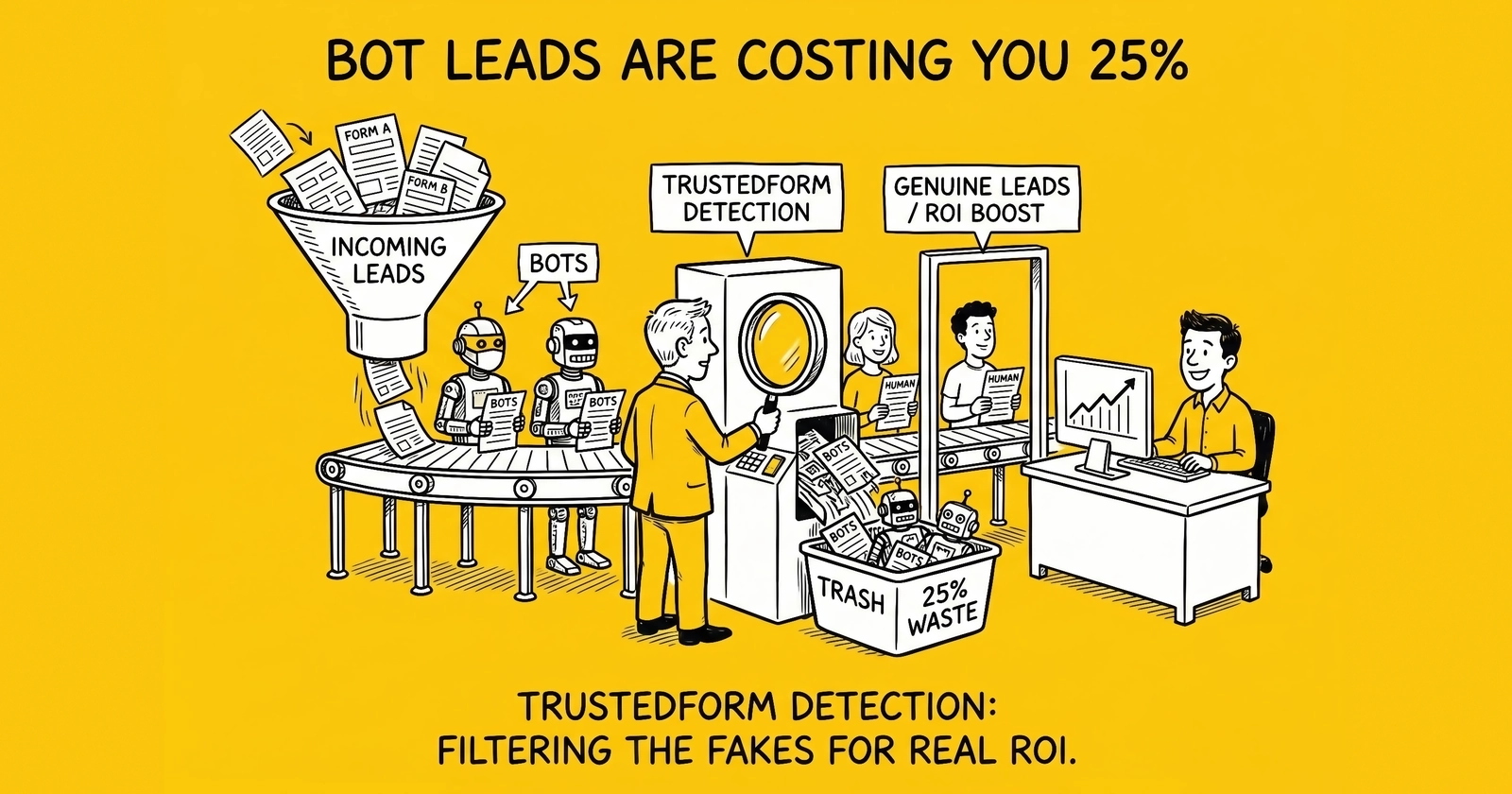

A 25% bot rate in lead form traffic and a December 2025 TrustedForm product launch turn fabricated consent from a theoretical TCPA risk into a measurable line on the operator P&L.

The 25% Number That Buyers Can No Longer Ignore

Anura’s 2025 Global Ad Fraud Report, published May 20, 2025, made the figure unavoidable. According to the report, 25% of all lead generation traffic is fake: submitted by bots, click farms, or fraud rings posing as real users. Anura projects that global digital ad fraud losses are on track to exceed $200 billion by 2028. Lead generation, with its high cost per acquisition and direct compliance exposure, sits inside that projection at higher concentration than display or social.

The number lands inside an industry already paying close to peak rates. Fraudlogix’s 2026 ad fraud baseline puts overall invalid traffic (IVT) at 20.64% across 105.7 billion impressions analyzed in 2025, with desktop running at 27.03% and the United States posting a 23.69% IVT rate against 7.80% in Europe. The browser and operating-system breakdown shows Chrome at 21.75% and legacy environments far higher: Internet Explorer at 79.50% and Windows 8 at 76.26%. The 25% lead-form bot rate is therefore not a vertical anomaly. It is the digital advertising baseline, concentrated through paid acquisition channels that funnel directly into form submissions.

ActiveProspect responded with a product. On December 2, 2025, the company launched TrustedForm Bot Detection, a certificate-level analysis layer that flags non-human form sessions before the lead reaches the buyer’s CRM. At launch, ActiveProspect reported flagging more than 150,000 leads per week as bots across monitored campaigns. The company quantified the downstream cost at over $1.4 billion in annual revenue lost across its customer base to bot-generated leads. Two months later, in its February 3, 2026 post “Designing a better lead ecosystem for 2026,” ActiveProspect framed bot detection as the year’s central lead-quality investment, alongside the January 2026 acquisition of Verisk Marketing Solutions, which folded Jornaya and Infutor into the same platform that already operates TrustedForm.

The 25% figure forces a question buyers had been able to defer while affiliate networks carried the optimization narrative. If one in four leads is non-human, what fraction of the marketing budget is funding nothing, what fraction of the CRM capacity is processing fictional consumers, and what fraction of the call center is dialing certificates that document no consent at all?

Why Bot Certificates Are Worse Than No Certificates

The TCPA framework rests on consent. Under 47 U.S.C. § 227 and the FCC’s implementing rules in 47 CFR 64.1200, a regulated call or text requires prior express written consent from the consumer the message reaches. The TrustedForm certificate is the industry’s standard evidence layer: a third-party-witnessed record of the form session, the consent language displayed, the keystrokes captured, and the submission timestamp. ActiveProspect data cited across its TCPA defense materials shows leads with certificates have meaningfully fewer successful TCPA disputes than uncertified leads — the certificate is the buyer’s most-cited defense artifact.

Bot leads break the underlying logic. A certificate captures interaction, not authorization. When the interaction is generated by automation — a script, a headless browser, a CAPTCHA-bypass service — the certificate documents the bot’s keystrokes, the bot’s timestamp, and the consent language the bot’s environment rendered. There was no consenting party. The downstream call to the phone number on the form is, legally, a call without consent.

ActiveProspect’s framing in the December 2025 launch announcement is direct: “Stopping bots after they reach your CRM isn’t good enough.” The implicit acknowledgment is that the TrustedForm certificate, on its own, does not distinguish bot from human. A buyer who treats the certificate as proof of consent without behavioral verification is treating fabricated consent as legitimate consent — and inheriting the same TCPA exposure they would have faced with no certificate at all.

The exposure math is harsh. TCPA statutory damages start at $500 per violation under negligence and triple to $1,500 per willful violation. The average TCPA class action settlement of $6.6 million reflects the multiplier effect of class certification across thousands of calls. WebRecon counted approximately 3,200 TCPA cases filed in 2025, with roughly 80% pursued as class actions. Defense costs run $40,000 to $750,000 even when defendants prevail. A bot-driven lead campaign that places 50,000 calls before detection produces theoretical statutory exposure of $25 million at the negligence rate alone — a number that does not move based on whether the buyer holds certificates, because the certificates document interactions that never had a consenting human attached to them.
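The exposure arithmetic above can be reproduced in a few lines. This is a minimal sketch that assumes every call counts as one violation, which is the simplification the paragraph itself uses; the function name is illustrative.

```python
NEGLIGENT_PER_VIOLATION = 500    # USD, statutory damages per violation
WILLFUL_PER_VIOLATION = 1_500    # USD, trebled amount for willful violations


def statutory_exposure(calls: int, willful: bool = False) -> int:
    """Theoretical statutory exposure in USD, assuming one violation per call."""
    per_violation = WILLFUL_PER_VIOLATION if willful else NEGLIGENT_PER_VIOLATION
    return calls * per_violation


print(statutory_exposure(50_000))                # 25000000
print(statutory_exposure(50_000, willful=True))  # 75000000
```

The willful figure shows why a record of ignored bot-pattern metadata matters: the same 50,000 calls carry three times the theoretical exposure.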

Fabricated consent is the worst of both states. The buyer paid the certificate fee, paid the lead price, paid the dialer cost, and now carries the same TCPA exposure they would have faced without any compliance infrastructure. The defense narrative inverts: plaintiffs’ attorneys weaponize the certificate, arguing that the buyer had explicit visibility into form session metadata showing non-human behavior and proceeded anyway. The certificate becomes evidence of negligent process rather than proof of authorization.

The legal precedent is still maturing. Plaintiff firms have begun citing form-session metadata — completion times under 200 milliseconds, missing mouse coordinates, headless-browser user agents — to allege buyers should have known the consent was non-human. The argument tracks how the FCC’s one-to-one consent rule reframed defense expectations: knowing what consent looked like at submission time is now part of the buyer’s affirmative duty. A TrustedForm certificate that captures bot-pattern metadata and is acted upon anyway sits poorly inside that duty framework.

Six Bot Detection Signals That Actually Discriminate

ActiveProspect’s bot detection technology, surfaced through TrustedForm Insights, operates on six categories of signal that have emerged as the industry’s working taxonomy. None of them is novel in isolation; the discrimination value comes from aggregating them at the certificate level rather than the network or device level.

Time-on-form distribution. Human form completion follows a recognizable distribution — most legitimate insurance quote forms see median completion times in the 45 to 180 second range, with a long tail for users who pause to look up policy numbers or VIN data. Bot completions cluster at the extremes: sub-3-second submissions for naive scripts, or unnaturally uniform 30-second submissions for sophisticated automation that paces itself to look human. The signal is the distribution shape, not any single time. A feed whose time-on-form histogram shows two narrow peaks rather than a single broad distribution is showing two populations: humans and bots.
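A rough way to look for the two bot clusters described above is to measure how much of a feed completes in under 3 seconds and how much piles into a single narrow time bucket. The sketch below is an illustration only: the 2-second bucket width and the function name are assumptions, not part of any vendor's method.

```python
from collections import Counter


def time_on_form_profile(seconds, fast_cutoff=3.0, bucket_width=2.0):
    """Summarize completion times for one feed.

    fast_share: fraction of submissions completed faster than fast_cutoff.
    peak_share: fraction of submissions in the single most crowded bucket
                of bucket_width seconds (ignoring the fast submissions).
    """
    if not seconds:
        return {"fast_share": 0.0, "peak_share": 0.0}
    total = len(seconds)
    fast = sum(1 for s in seconds if s < fast_cutoff)
    buckets = Counter(int(s // bucket_width) for s in seconds if s >= fast_cutoff)
    peak = max(buckets.values(), default=0)
    return {"fast_share": fast / total, "peak_share": peak / total}


humans = [45, 60, 72, 95, 110, 140, 180, 210]
naive_bots = [1.2, 0.8, 2.1]
paced_bots = [30.0, 30.1, 30.2, 30.4]
print(time_on_form_profile(humans))
print(time_on_form_profile(humans + naive_bots + paced_bots))
```

A broad human distribution keeps both shares low. A feed with a high fast_share or a high peak_share is worth a closer look, though the thresholds need tuning per form.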

IP velocity. Legitimate consumer traffic from a residential ISP rarely produces multiple form submissions per minute. Coordinated bot traffic, particularly from data center IPs and compromised residential proxies, shows velocity spikes — 100 leads from the same /24 subnet in seconds, or repeated submissions from the same IP across different consumer identities. Fraudlogix’s 2026 baseline ranks data center traffic and VPN abuse among the top fraud sources. ActiveProspect’s certificate-level analysis layers IP velocity against time-of-day and source attribution to flag the volumetric anomaly without false-positiving genuine household traffic.
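The velocity check can be sketched as a count of submissions per /24 subnet per minute. The version below uses Python's standard ipaddress module, assumes IPv4 addresses and epoch-second timestamps, and uses the more-than-5-per-minute threshold from the taxonomy table; the function names are hypothetical.

```python
import ipaddress
from collections import Counter


def subnet_minute_counts(events):
    """Count submissions per (/24 subnet, minute).

    events: iterable of (ipv4_string, epoch_seconds) pairs.
    """
    counts = Counter()
    for ip, ts in events:
        # strict=False lets a host address be collapsed to its /24 network
        subnet = ipaddress.ip_network(f"{ip}/24", strict=False)
        counts[(str(subnet), int(ts // 60))] += 1
    return counts


def velocity_flags(events, max_per_minute=5):
    """Return the (subnet, minute) pairs that exceed the per-minute limit."""
    return {key: n for key, n in subnet_minute_counts(events).items()
            if n > max_per_minute}


burst = [(f"203.0.113.{i}", 1000 + i) for i in range(8)]
normal = [("198.51.100.4", 1000)]
print(velocity_flags(burst + normal))
```

Fixed one-minute windows are the simplest choice; a sliding window would catch bursts that straddle a minute boundary.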

Device clustering. Device fingerprinting combines browser type, OS, screen resolution, plugin set, and font rendering signatures into an identifier that persists across sessions. Bot operations frequently reuse device fingerprints across thousands of submissions because spoofing every signal independently is expensive. A feed where 5,000 leads share fewer than 50 unique device fingerprints is showing a fraud farm. Fraudlogix’s 2026 statistics rank Linux at 41.78% IVT and Windows at 30.55% — Linux’s elevation reflects automation infrastructure rather than consumer use.
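The clustering signal reduces to a ratio of unique fingerprints to submissions. In the sketch below, the 1% cutoff is derived from the 50-in-5,000 example above and is an assumption rather than a published threshold; fingerprints are treated as opaque strings.

```python
def fingerprint_diversity(fingerprints):
    """Unique fingerprints divided by submissions (1.0 = all distinct)."""
    if not fingerprints:
        return 1.0
    return len(set(fingerprints)) / len(fingerprints)


def looks_like_fraud_farm(fingerprints, min_ratio=0.01):
    """True when a feed reuses a very small pool of device fingerprints."""
    return fingerprint_diversity(fingerprints) < min_ratio


reused = [f"fp-{i % 40}" for i in range(5_000)]   # 40 devices, 5,000 leads
distinct = [f"fp-{i}" for i in range(5_000)]      # one device per lead
print(looks_like_fraud_farm(reused), looks_like_fraud_farm(distinct))
```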

Behavioral entropy. Form interaction generates measurable entropy: variability in mouse trajectory, scroll velocity, typing cadence, focus events, and inter-keystroke intervals. ActiveProspect publishes specific metrics it analyzes inside the TrustedForm certificate: form_input_kpm (keys per minute), form_input_wpm (words per minute), and form_input_method (whether input was typed, pasted, or programmatically inserted). Human entropy is high and noisy; bot entropy collapses toward uniform values or is absent entirely. A submission with form_input_method = “programmatic” and zero mouse movement is a bot. A submission with form_input_kpm at exactly 240 across every field is a sophisticated bot pacing itself.
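The two examples in this paragraph can be expressed as simple rules. In the sketch below, form_input_method comes from the metrics named above, while mouse_events and per_field_kpm are hypothetical fields used for illustration; the actual certificate payload may expose these values differently.

```python
from statistics import pstdev


def behavioral_flags(cert):
    """Return the list of low-entropy indicators found in one session record."""
    flags = []
    if cert.get("form_input_method") == "programmatic":
        flags.append("programmatic_input")
    # mouse_events is a hypothetical count of recorded pointer movements
    if cert.get("mouse_events", 0) == 0:
        flags.append("no_mouse_movement")
    # per_field_kpm is a hypothetical list of keys-per-minute, one per field
    kpm = cert.get("per_field_kpm", [])
    if len(kpm) >= 3 and pstdev(kpm) < 1.0:
        flags.append("uniform_typing_speed")
    return flags


bot = {"form_input_method": "programmatic", "mouse_events": 0,
       "per_field_kpm": [240, 240, 240, 240]}
human = {"form_input_method": "typed", "mouse_events": 212,
         "per_field_kpm": [180, 240, 150, 310]}
print(behavioral_flags(bot))
print(behavioral_flags(human))
```

A missing-mouse flag on its own is weak evidence, since touch devices produce no mouse trajectory; it is more useful in combination with the other two.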

CAPTCHA bypass patterns. Modern CAPTCHA-solving services price solves at well under one cent — published rates from major bypass providers commonly cluster around $0.001 to $0.003 per solve for reCAPTCHA v2 image challenges. CAPTCHA presence does not stop bot traffic; it taxes it. The signal value of CAPTCHA is the metadata it produces: solve time, solve provider fingerprint, and solve session entropy. Legitimate human solves take 8 to 30 seconds; CAPTCHA-bypass solves often take 1 to 4 seconds because the service runs the challenge in parallel with the bot’s form fill. A feed where CAPTCHA solve times cluster at the low end is producing bot traffic that has been laundered through a solving service. ActiveProspect’s bot-detection signal layer treats CAPTCHA as a data source, not a gatekeeper.
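Treating CAPTCHA as a data source can be as simple as tracking, per feed, the share of solves faster than the 4-second boundary mentioned above. This sketch assumes solve times are already captured in seconds and keyed by feed; the function name and the 50% review threshold are illustrative.

```python
def fast_solve_share(solve_seconds, cutoff=4.0):
    """Fraction of CAPTCHA solves completed faster than cutoff seconds."""
    if not solve_seconds:
        return 0.0
    return sum(1 for s in solve_seconds if s < cutoff) / len(solve_seconds)


def feeds_to_review(solves_by_feed, max_fast_share=0.5):
    """Feeds whose solves cluster at the low end, worst first."""
    shares = {feed: fast_solve_share(times) for feed, times in solves_by_feed.items()}
    flagged = [(feed, share) for feed, share in shares.items() if share > max_fast_share]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


solves = {
    "feed_a": [9.5, 14.0, 22.3, 11.8],       # human-looking range
    "feed_b": [1.4, 2.0, 3.1, 2.6, 12.0],    # mostly sub-4-second solves
}
print(feeds_to_review(solves))
```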

Headless-browser and execution-environment fingerprints. Headless Chrome, Puppeteer, Playwright, and Selenium leak detectable signals — missing or modified navigator.webdriver properties, absent peripheral hardware, automation-framework user agents, JavaScript execution traces that diverge from real browsers. Even sophisticated bot operators using stealth plugins leave residual fingerprints in the certificate-level metadata. ActiveProspect’s bot-detection technology explicitly evaluates “execution environment checks” for automation frameworks and headless browsers as part of its certificate analysis.
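A server-side version of the most basic environment checks might look like the sketch below. It assumes the form's client script reports the user agent and the value of navigator.webdriver back with the submission, which is a hypothetical setup; the token list is deliberately short and illustrative, and stealth tooling will evade checks this simple.

```python
from typing import List, Optional

# Tokens that appear in the default user agents of some automation tools.
AUTOMATION_UA_TOKENS = ("headlesschrome", "phantomjs")


def environment_flags(user_agent: str, webdriver: Optional[bool]) -> List[str]:
    """Return execution-environment indicators for one submission.

    webdriver is the reported navigator.webdriver value, or None when the
    client script could not read the property at all.
    """
    flags = []
    ua = user_agent.lower()
    if any(token in ua for token in AUTOMATION_UA_TOKENS):
        flags.append("automation_user_agent")
    if webdriver is True:
        flags.append("webdriver_true")
    elif webdriver is None:
        flags.append("webdriver_missing")
    return flags


headless = "Mozilla/5.0 (X11; Linux x86_64) HeadlessChrome/120.0.0.0 Safari/537.36"
regular = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0.0.0 Safari/537.36"
print(environment_flags(headless, True))
print(environment_flags(regular, False))
```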

The signal taxonomy below maps each indicator to the mitigation layer where it can be enforced. Most operators run two or three of these in production; the discrimination value scales near-linearly with the number of independent signals stacked, because bots that defeat one signal frequently fail another.

| Signal | Indicator pattern | Best mitigation layer |
|---|---|---|
| Time-on-form distribution | Bimodal histogram; sub-3s or rigid uniform completion | Pre-submit JavaScript, post-submit certificate analysis |
| IP velocity | >5 submissions/minute from single /24; data center IP ranges | Edge WAF, certificate-level rate analysis |
| Device clustering | <50 unique fingerprints across 5,000 submissions; reused canvas hashes | Certificate-level fingerprint aggregation |
| Behavioral entropy | form_input_method = programmatic; uniform KPM; missing mouse trajectory | TrustedForm Insights certificate analysis |
| CAPTCHA bypass | <4-second solve times; solver-fingerprint correlation across submissions | CAPTCHA-as-signal, not gate |
| Execution environment | Headless markers; automation-framework user agents; navigator.webdriver leakage | Certificate-level browser audit |

The taxonomy is not exhaustive. Fraud-farm operators using real devices defeat several signals simultaneously, an escalation that detection vendors are openly preparing for as the next layer. The current signal set discriminates reliably against automated bot traffic, which is where the 25% baseline lives.

TrustedForm Bot Detection — What It Actually Does and What It Costs

ActiveProspect’s December 2, 2025 launch of TrustedForm Bot Detection turned the bot signal from a buy-side speculation into a vendor-managed certificate field. The product sits inside TrustedForm Insights, the analytics layer ActiveProspect uses to expose 22 proprietary data points per certificate to lead buyers. Bot Detection adds a flag — and the certificate-level metadata that supports the flag — to every TrustedForm certificate the buyer receives.

The mechanism is contextual analysis at the certificate level rather than IP reputation lookup at the network level. Where competing tools rely primarily on IP reputation, user-agent strings, or downstream phone validation, TrustedForm Bot Detection operates against the form-session metadata it already collects: keystroke timing, mouse and scroll patterns, focus events, execution-environment checks, and behavioral metrics including form_input_kpm, form_input_wpm, and form_input_method. Because the analysis runs against data ActiveProspect already certifies, it produces a flag tied to a specific certificate ID — a discrete artifact the buyer can route on, reject on, or quarantine for review.

ActiveProspect’s launch documentation specifies that suspected bot leads can be “flagged, filtered, rejected, or routed for review” before reaching sales or automation pipelines. The integration runs on top of the existing TrustedForm JavaScript install and certificate-claim API, which means buyers already running TrustedForm in production can adopt Bot Detection without re-instrumenting their forms. New buyers face the standard TrustedForm rollout — JavaScript install, hidden field configuration, server-side claim API, retention configuration aligned with TCPA’s four-year statute of limitations.

Pricing transparency is incomplete. As of April 2026, ActiveProspect has not published a per-certificate price for the Bot Detection add-on. The base TrustedForm certificate retention model is volume-based — buyers pay per certificate retained per month, with session-replay tiers commonly quoted in the $0.15 to $0.50 range depending on volume commitment. Bot Detection is sold as an upgrade inside TrustedForm Insights rather than a standalone product, with pricing negotiated against monthly certificate volume. Operators evaluating the add-on should request a quote that specifies their current monthly certificate count, target retention period, and the proportion of certificates they expect to bot-flag.

Two structural points matter for the ROI calculation. First, Bot Detection runs against certificates already being purchased, which means the incremental cost is an upgrade fee, not a new vendor relationship. Buyers do not stand up a parallel fraud-detection stack — they upgrade an existing one. Second, the flag operates pre-CRM, which means the buyer never pays the downstream costs of the bot lead: dialer minutes, agent time, CRM record, marketing-automation drip, retargeting pixel. The cost avoidance compounds across the lead’s full lifecycle, not just the lead price itself.

For buyers running a competing or complementary stack — Jornaya LeadiD, internal phone validation, identity-graph matching — the practical question is layering rather than replacement. The TrustedForm vs. Jornaya comparison maps the platform-level differences; with the January 2026 ActiveProspect acquisition of Verisk Marketing Solutions folding Jornaya and Infutor into the same parent, the layering question is becoming a single-vendor question. Buyers who run both certificate platforms today are increasingly running both inside the same pricing relationship.

The Affiliate-Leak Audit Playbook

The 25% headline rate is an industry average. The operating reality is bimodal: clean affiliates running below 5% bot rate sit alongside compromised feeds running 40% or higher, often inside the same network. The audit that surfaces this distribution is sub-ID-level analysis, and it is the only reliable detection layer at the network boundary.

Affiliate networks layer publishers behind sub-IDs, sub-affiliates, and sub-networks. A single fraudster operating under multiple sub-IDs inside a sub-network can route bot traffic into a buyer’s pipeline while the network’s aggregate metrics report acceptable averages. The Affiliate and Partner Marketing Association notes that subnetwork transparency data is largely self-reported, with no independent audit of vetting claims. Sub-ID transparency is the audit primitive — without it, the buyer cannot reach the actual publisher.

The mechanics of the audit are straightforward and inexpensive once the bot-flag signal is instrumented. The buyer enables TrustedForm Bot Detection or an equivalent certificate-level signal across all incoming feeds, captures one full traffic cycle (typically 14 to 28 days for a stable feed mix), and segments the bot-flag rate by sub-ID, source URL, campaign, and creative. The output is a sub-ID ranking — a kill list of publishers whose flag rate exceeds the buyer’s tolerance threshold.
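The segmentation step described above amounts to a group-by on sub-ID. The sketch below assumes each lead has been reduced to a (sub_id, is_bot_flagged) pair; the 20% tolerance and the 100-lead minimum sample are placeholder values a buyer would set for their own feeds.

```python
from collections import defaultdict


def flag_rate_by_sub_id(leads):
    """Return {sub_id: (total_leads, flagged_leads, flag_rate)}."""
    totals, flagged = defaultdict(int), defaultdict(int)
    for sub_id, is_flagged in leads:
        totals[sub_id] += 1
        flagged[sub_id] += int(is_flagged)
    return {s: (totals[s], flagged[s], flagged[s] / totals[s]) for s in totals}


def kill_list(leads, tolerance=0.20, min_leads=100):
    """Sub-IDs over the tolerance threshold, worst first.

    Sub-IDs with fewer than min_leads are skipped as too small to judge.
    """
    stats = flag_rate_by_sub_id(leads)
    over = [(sub_id, rate) for sub_id, (total, _, rate) in stats.items()
            if total >= min_leads and rate > tolerance]
    return sorted(over, key=lambda item: item[1], reverse=True)


leads = ([("A", i < 60) for i in range(100)]     # 60% flagged
         + [("B", i < 3) for i in range(100)]    # 3% flagged
         + [("C", True) for _ in range(10)])     # too few leads to rank
print(kill_list(leads))
```

The same grouping can be repeated with source URL, campaign, or creative as the key.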

Several specific patterns recur in these audits. Feeds that route through sub-affiliate sub-networks show flag-rate variance an order of magnitude higher than feeds with direct publisher relationships, because the sub-network layer obscures the actual traffic source. Feeds running on incentivized traffic — gift card offers, sweepstakes, points programs — show low bot-flag rates but elevated downstream non-conversion, indicating human fraud farms rather than automation. Feeds tied to volatile creative — frequent rotation, aggressive offer promises, low CPL caps — correlate with elevated bot rates because the creative selection pressure rewards velocity over quality.

The audit framework below maps the four primary inputs to the diagnostic question each one answers and the action that resolves a failing reading. Operators running the framework typically find that 15% to 35% of their sub-IDs account for 60% to 80% of their bot-flag volume — a Pareto distribution that makes the kill list short and the cleanup tractable.

| Audit input | Diagnostic question | Action when failing |
|---|---|---|
| Bot-flag rate by sub-ID | Which publishers are leaking automation? | Pause sub-ID; renegotiate with parent network |
| Time-on-form by sub-ID | Which publishers compress completion? | Investigate creative; demand publisher details |
| Conversion-to-quote by sub-ID | Which publishers produce zero downstream value? | Cross-check against bot rate; flag fraud farm |
| Sub-network composition | Is the publisher behind a sub-network curtain? | Demand transparency; enforce direct-publisher rule |
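To check whether a given feed mix shows the Pareto concentration described before the table, one option is to find the smallest set of sub-IDs that accounts for a chosen share of flagged volume. This is a minimal sketch with made-up counts; the 80% target is an assumption taken from the upper end of the range quoted above.

```python
def pareto_concentration(flagged_by_sub_id, volume_share=0.8):
    """Smallest worst-first set of sub-IDs covering volume_share of flags."""
    total = sum(flagged_by_sub_id.values())
    if total == 0:
        return []
    picked, running = [], 0
    ranked = sorted(flagged_by_sub_id.items(), key=lambda kv: kv[1], reverse=True)
    for sub_id, flagged in ranked:
        picked.append(sub_id)
        running += flagged
        if running >= volume_share * total:
            break
    return picked


# Hypothetical flagged-lead counts per sub-ID
flagged = {"A": 600, "B": 250, "C": 50, "D": 40, "E": 30, "F": 20, "G": 10}
worst = pareto_concentration(flagged)
print(worst, f"{len(worst) / len(flagged):.0%} of sub-IDs")
```

In this made-up feed, two of seven sub-IDs cover 85% of flagged leads, which is the kind of short list the audit is meant to produce.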

The audit does not require the buyer to drop the network. It requires the buyer to drop the sub-IDs. Networks that resist sub-ID transparency or refuse to terminate compromised sub-affiliates are themselves the audit failure — and the kill list extends one level up. ActiveProspect’s broader Vendor Reports and Optimization Hub releases through 2025 are designed to expose vendor-level visibility precisely because networks will not self-police at the sub-ID layer without buyer-side instrumentation forcing the issue.

The companion to this audit is post-submission validation against phone, email, and address signals — bot detection catches the automation layer, but human fraud farms produce real-looking submissions that defeat behavioral entropy checks. Stacking certificate-level bot detection with phone-line-type validation and identity-graph matching catches both populations. The insurance lead fraud patterns analysis documents how solar, auto insurance, and Medicare verticals see compounded fraud rates because high payouts attract both automation and fraud-farm capacity at the same affiliate boundaries.

Switching-Cost Math: Detection vs. Affiliate Drop

Once the audit produces a sub-ID kill list, the buyer faces a real decision: pay for detection across all feeds, or drop contaminated affiliates and rebuild volume from clean publishers. The math is sensitive to four numbers, and most buyers run it incorrectly because they price the detection fee against the lead price rather than against the recoverable fraud spend.

A worked example with realistic numbers. A buyer purchases 10,000 leads per month at $25 per lead, for $250,000 in monthly lead spend. The audit identifies a 25% overall bot rate concentrated in three sub-IDs that together produce 4,000 leads per month, with bot rates of 60%, 45%, and 35%. The other 6,000 leads come from clean publishers running below 5% bot rate. Total fraudulent spend across the 10,000 leads is approximately $62,500 per month (25% of $250,000), most of it inside those three sub-IDs.

Path 1: Detection across all feeds. TrustedForm Bot Detection priced inside an upgrade tier costs $2,000 per month against 10,000 certificates, assuming $0.20 per certificate, an illustrative figure near the low end of the $0.15 to $0.50 range quoted for retention tiers. The detection layer rejects bot leads pre-CRM, recovering $62,500 in fraud spend (lead fees on rejected leads typically refund per network terms). Net monthly recovery: approximately $60,500, before TCPA exposure reduction. Payback ratio: 30x.
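The Path 1 figures follow from three inputs: certificate count, fee per certificate, and recoverable fraud spend. The sketch below reproduces them and can be rerun with a quoted fee once a buyer has one; the function name is illustrative and the $0.20 fee is the assumption used in the worked example.

```python
def detection_payback(certificates, fee_per_certificate, recovered_spend):
    """Return (monthly_cost, net_recovery, payback_ratio) in USD."""
    cost = certificates * fee_per_certificate
    net = recovered_spend - cost
    return cost, net, net / cost


cost, net, ratio = detection_payback(10_000, 0.20, 62_500)
print(f"cost ${cost:,.0f}, net ${net:,.0f}, payback {ratio:.1f}x")
```

Because the fee scales with certificate count while the recovery scales with bot rate, the ratio degrades on clean feeds and improves on contaminated ones.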

Path 2: Drop the three sub-IDs entirely. The buyer terminates the contaminated affiliates and loses 4,000 leads per month — but those 4,000 contained 1,580 real leads (the non-bot fraction across the three sub-IDs at 60%, 45%, and 35% bot rates). The buyer must replace 1,580 real leads from new publisher relationships, which typically takes 30 to 90 days at 10% to 25% higher CPL during ramp. The replacement cost is $5,000 to $15,000 above baseline during the rebuild window, and the buyer loses the 1,580 real leads in the interim — at $25 each, that is $39,500 per month of lost legitimate volume.

Path 3: Detection plus selective sub-ID drop. The buyer runs detection across all feeds (catching residual bots from clean publishers) and pauses only the worst sub-ID at 60% bot rate while renegotiating with the other two. Detection cost stays at $2,000 per month. Lead-spend recovery from the dropped sub-ID approximates $20,000 per month. Detection-driven rejection on the remaining feeds recovers another $30,000 per month. The buyer keeps the 1,000 real leads from the worst sub-ID by replacing them at the margin from clean publishers. Net monthly recovery: approximately $48,000 with no rebuild risk.

| Path | Monthly cost | Monthly recovery | Net | Risk |
|---|---|---|---|---|
| Detection across all feeds | $2,000 | $62,500 | +$60,500 | Low (vendor-managed) |
| Drop three sub-IDs | $0 | $30,000 (after rebuild loss) | +$30,000 | High (30 to 90 day rebuild) |
| Detection + selective drop | $2,000 | $50,000 | +$48,000 | Medium (partial rebuild) |

The math almost always favors detection-first, because the detection fee is small relative to recoverable fraud spend and the rejection signal is reversible. Affiliate drops are not reversible — once a network is terminated, the relationship and the clean traffic inside it are both gone for a quarter or more. The right sequencing is detection first, audit second, selective drop on the residual worst offenders. Detection is the instrumentation; the affiliate drop is the surgical action that detection makes possible.

This sequencing is also what protects the TCPA defense narrative. A buyer who runs detection and acts on flags has documented affirmative diligence — every certificate either cleared the bot signal or was rejected. A buyer who drops affiliates without detection has reduced fraud volume but cannot prove which leads were bot-driven and which were legitimate, leaving the historical exposure intact for the TCPA statute of limitations. The detection record is itself a defense artifact, separate from its operational value.

The Human Fraud Farm Escalation

Bot detection is winning against the automation layer, which is why the fraud capacity is migrating to the next layer up. Human fraud farms — large groups of low-wage workers in Bangladesh, the Philippines, India, China, and Indonesia who manually complete forms for cents per submission — defeat every signal in the bot detection taxonomy. They use real devices, real browsers, real residential IPs, real CAPTCHA solves with realistic timing, real mouse trajectories, real typing cadence. The behavioral entropy checks read clean because the entropy is genuine.

The economics make the migration inevitable. A bot operation pays approximately $0.001 to $0.003 per CAPTCHA solve and produces submissions at machine speed; a human fraud farm pays approximately $0.05 to $0.50 per completed form depending on complexity and produces submissions at human speed. The cost differential is two orders of magnitude — but human fraud farms produce submissions that pass certificate-level bot detection, where bot operations increasingly fail. As detection raises the cost of automation, the cost differential closes from the buyer’s perspective: a bot lead that gets rejected costs the fraud operator the full lead price; a fraud-farm lead that passes detection collects the full lead price.

The detection layer that catches human fraud farms is not behavioral. Fraud-farm submissions cluster on:

- Residential IPs in low-cost geographies — Fraudlogix’s 2026 country rankings put Nigeria at 61.37% IVT, Uzbekistan at 61.07%, Bangladesh at 60.01%, with similar concentration in Southeast Asia

- Real but disposable contact data — phone numbers tied to short-lived prepaid SIMs, email addresses on disposable domains, addresses assembled from stolen identity fragments

- Zero downstream conversion — the submission completes, the certificate validates, the consent appears legitimate, and the lead never converts because the submitter has no consumer intent

Catching human fraud farms requires post-submission validation against real-time phone, email, and identity signals, typically through phone validation APIs and identity-graph matching. The Verisk acquisition pulled Infutor’s identity-resolution capabilities into the ActiveProspect stack precisely because the next-layer detection requires identity-graph data the company did not previously control. The 2026 product roadmap points toward identity-layer fraud detection as the next discriminator.

For operators, the implication is that bot detection is necessary but not sufficient. The 25% baseline is the bot floor — a fraud-farm overlay can push the real fraud rate above 35% in high-payout verticals like solar and Medicare, with the fraud-farm fraction looking entirely human at the certificate layer. The combined audit framework — certificate-level bot detection plus identity-graph and conversion validation — is the operating posture that survives the migration.

A 30-Day Operator Audit That Produces a Decision

Most buyers do not have a current measurement of their bot rate. The audit framework below produces one in 30 days, with no infrastructure beyond TrustedForm Bot Detection or an equivalent certificate-level signal, and outputs a sub-ID kill list and a switching-cost decision at the end of the window.

Week 1: Instrument. Enable TrustedForm Bot Detection across all incoming TrustedForm feeds (or stand up an equivalent certificate-level signal — Jornaya LeadiD’s behavioral analytics layer, third-party fraud detection vendors that integrate at form-submit). Confirm flag delivery to the lead-management platform with sub-ID, source, and campaign attribution preserved. Configure rejection or quarantine logic for high-confidence bot flags. Document the baseline rejection rate before the signal is active to establish a clean comparison point.
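The rejection-or-quarantine logic in Week 1 can be sketched as a small routing function. The field names bot_flag and bot_confidence are hypothetical stand-ins for whatever the detection signal actually delivers, and the 0.9 cutoff for a high-confidence flag is an assumption; the point of the sketch is that sub-ID, source, and campaign travel with the routing decision.

```python
def route_lead(lead, reject_at=0.9):
    """Decide where a lead goes while preserving its attribution fields."""
    flagged = bool(lead.get("bot_flag", False))
    confidence = float(lead.get("bot_confidence", 0.0))
    if not flagged:
        route = "deliver"
    elif confidence >= reject_at:
        route = "reject"          # high-confidence bot flag
    else:
        route = "quarantine"      # flagged, but held for manual review
    return {
        "route": route,
        "sub_id": lead.get("sub_id"),
        "source": lead.get("source"),
        "campaign": lead.get("campaign"),
    }


lead = {"bot_flag": True, "bot_confidence": 0.97,
        "sub_id": "net7-sub42", "source": "example.com/quote", "campaign": "auto-q1"}
print(route_lead(lead))
```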

Week 2: Capture. Run a full traffic cycle with the signal active. For most buyers this is 7 to 14 days of standard volume; buyers with weekly creative rotation or seasonal pricing should extend to capture a full cycle. Resist the urge to act on the data mid-cycle — pausing a sub-ID before the cycle completes biases the sample. Capture the certificate-level metadata (time-on-form distribution, device fingerprint hashes, IP attribution) for post-cycle analysis even if bot-flag rate is the headline output.

Week 3: Segment. Produce the sub-ID-level breakdown. Bot-flag rate, time-on-form distribution, device-fingerprint diversity, and conversion-to-quote rate, all segmented by sub-ID, source, and campaign. Rank sub-IDs by flag rate and identify the Pareto distribution — typically the worst 15% to 35% of sub-IDs account for 60% to 80% of flagged volume. Cross-reference flagged volume against downstream conversion to identify human-fraud-farm overlay (low flag rate plus zero conversion). The output is a ranked kill list and a residual-fraud estimate for the unflagged remainder.
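The fraud-farm cross-reference in Week 3 (low flag rate plus zero conversion) can be sketched as a filter over per-sub-ID totals. The dictionary layout, the 5% flag-rate ceiling, and the 200-lead minimum are assumptions chosen for illustration.

```python
def fraud_farm_suspects(stats, max_flag_rate=0.05, min_leads=200):
    """Sub-IDs that look clean to bot detection but never convert.

    stats: {sub_id: {"leads": int, "flagged": int, "quotes": int}}
    """
    suspects = []
    for sub_id, s in stats.items():
        if s["leads"] < min_leads:
            continue  # too little volume to draw a conclusion
        flag_rate = s["flagged"] / s["leads"]
        if flag_rate <= max_flag_rate and s["quotes"] == 0:
            suspects.append(sub_id)
    return sorted(suspects)


stats = {
    "clean":    {"leads": 900, "flagged": 20,  "quotes": 110},
    "bot_feed": {"leads": 800, "flagged": 450, "quotes": 15},
    "farm":     {"leads": 600, "flagged": 6,   "quotes": 0},
    "tiny":     {"leads": 40,  "flagged": 0,   "quotes": 0},
}
print(fraud_farm_suspects(stats))
```

A strict zero-conversion test suits a full traffic cycle; on shorter windows a low conversion threshold may be more practical.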

Week 4: Decide. Run the switching-cost math against the sub-ID rankings. For most buyers the decision is detection-first, selective sub-ID drop second, with the worst 1 to 3 sub-IDs paused or terminated and the remainder retained under detection. Document the historical bot-flag rate as a defense artifact for the TCPA statute-of-limitations window. Configure ongoing monitoring — the sub-ID distribution shifts as fraud capacity migrates between feeds, so the audit becomes a quarterly recurring exercise rather than a one-time event.

The audit answers three questions that buyers cannot reach without instrumentation. First, what is the actual bot rate across the buyer’s specific traffic mix — typically a number bracketing the 25% Anura baseline, sometimes well above it in high-payout verticals. Second, which sub-IDs are leaking — almost always a short list, even in feeds running 40%+ aggregate flag rates. Third, what is the right operating posture — detection alone for clean feeds, detection plus drop for compromised sub-IDs, full network exit only when the network refuses sub-ID transparency.

The 30-day window produces enough signal to act. Buyers who delay because they want a “complete” picture are paying the bot tax monthly while they wait for an analysis that will never look more complete than the 30-day output, because the underlying fraud capacity migrates faster than retrospective analysis can keep up.

Key Takeaways

- The 25% bot rate in lead form traffic is the industry baseline, not a vertical anomaly. Anura’s 2025 Global Ad Fraud Report quantifies it across the digital lead ecosystem; Fraudlogix’s 2026 baseline confirms a 20.64% IVT rate across 105.7 billion impressions. Operators who do not instrument detection are paying the tax without measuring it, and high-payout verticals routinely exceed the baseline because fraud capacity follows payout density.

- Fabricated consent is the worst legal state, not the best. A TrustedForm certificate that documents a bot’s keystrokes carries the same TCPA exposure as no certificate at all, and plaintiff firms have begun using form-session metadata to argue the buyer should have known. The certificate becomes evidence of negligent process unless detection is layered on top.

- TrustedForm Bot Detection, launched December 2, 2025, is the certificate-level signal that turns bot rate into a measurable line on the P&L. ActiveProspect was flagging 150,000+ leads per week as bots at launch; the company quantified annual revenue lost to bot leads across its customer base at over $1.4 billion. The product runs as an upgrade inside TrustedForm Insights with pricing negotiated against monthly certificate volume.

- The six bot detection signals that discriminate are time-on-form distribution, IP velocity, device clustering, behavioral entropy, CAPTCHA bypass patterns, and execution-environment fingerprints. No single signal is decisive; the discrimination value scales with the number of independent signals stacked because bots that defeat one signal frequently fail another.

- Sub-ID-level audit is the only reliable affiliate detection layer. Networks aggregate clean and compromised publishers behind sub-IDs and sub-networks; aggregate metrics obscure the bimodal distribution. The Pareto pattern is consistent: 15% to 35% of sub-IDs account for 60% to 80% of bot volume, which makes the kill list short and the cleanup tractable.

- Switching-cost math favors detection first, selective sub-ID drop second. Detection priced in the $0.10 to $0.50 per certificate range produces 12x to 62x payback against recoverable fraud spend before any TCPA exposure reduction. Affiliate drops are not reversible and discard the clean traffic mixed inside contaminated feeds; detection is reversible and tells the operator exactly which sub-IDs to target.

- Human fraud farms are the next layer. As bot detection raises the cost of automation, fraud capacity migrates to manual form-completion farms in low-cost geographies, which defeat every behavioral signal because the entropy is genuine. The next detection layer is identity-graph and conversion validation, which is why ActiveProspect’s January 2026 acquisition of Verisk Marketing Solutions folded Infutor’s identity resolution into the same platform.

- A 30-day operator audit produces actionable sub-ID rankings without infrastructure beyond the bot signal itself. Week 1 instruments, Week 2 captures, Week 3 segments, Week 4 decides. Buyers who delay because they want a complete picture are paying the bot tax monthly while waiting for an analysis that will never look more complete than the 30-day output.

- Detection is also a defense artifact. A buyer who runs bot detection and acts on flags has documented affirmative diligence inside the TCPA exposure window. The detection record sits alongside the certificate as evidence of process, and is increasingly necessary to keep the certificate functioning as the consent-defense layer it was designed to be.
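
The sub-ID ranking step from the takeaways above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not ActiveProspect's method or API: the `SubIdStats` record, the `rank_kill_list` function, the 80% coverage target, and every count in the sample feed are hypothetical, and `bot_flagged` stands in for whatever per-lead bot signal the operator has instrumented.

```python
from dataclasses import dataclass


@dataclass
class SubIdStats:
    """Hypothetical per-sub-ID totals captured during the audit window."""
    sub_id: str
    leads: int        # all leads received from this sub-ID
    bot_flagged: int  # leads the bot signal flagged as non-human


def rank_kill_list(stats, target_share=0.8):
    """Return the smallest worst-first set of sub-IDs whose flagged
    leads cover at least target_share of all flagged bot volume."""
    total_bots = sum(s.bot_flagged for s in stats)
    if total_bots == 0:
        return []  # nothing flagged, nothing to drop
    ranked = sorted(stats, key=lambda s: s.bot_flagged, reverse=True)
    kill, covered = [], 0
    for s in ranked:
        if covered / total_bots >= target_share:
            break  # coverage target reached
        kill.append(s.sub_id)
        covered += s.bot_flagged
    return kill


# Illustrative numbers only; not data from any report cited here.
feed = [
    SubIdStats("A1", 1000, 20),
    SubIdStats("B2", 800, 600),
    SubIdStats("C3", 1200, 30),
    SubIdStats("D4", 500, 350),
    SubIdStats("E5", 900, 10),
    SubIdStats("F6", 700, 15),
    SubIdStats("G7", 1100, 25),
    SubIdStats("H8", 600, 10),
]

kill = rank_kill_list(feed)
print(kill)
```

On this made-up feed, two of eight sub-IDs carry about 90% of the flagged volume, which is the bimodal shape that aggregate network metrics hide. Ranking by flagged count rather than flagged rate is a deliberate choice in this sketch: it prioritizes the sub-IDs that cost the most in absolute terms, though an operator may reasonably also want a per-sub-ID rate threshold.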

Sources

- Anura Solutions, “Global Ad Fraud Report: An Insider’s Look at Invalid Traffic,” May 20, 2025. https://www.anura.io/media/anura-launches-global-ad-fraud-report

- ActiveProspect, “Now live: ActiveProspect’s Bot Detection,” December 2, 2025. https://activeprospect.com/blog/trustedform-bot-detection/

- ActiveProspect, “Designing a better lead ecosystem for 2026,” February 3, 2026. https://activeprospect.com/blog/better-lead-ecosystem-2026/

- ActiveProspect, “Understanding fraud and bot detection technology,” 2025. https://activeprospect.com/blog/bot-detection-technology/

- ActiveProspect, “Access to leads’ data with TrustedForm Insights — Bot Detection.” https://activeprospect.com/trustedform/insights/bot-detection/

- Fraudlogix, “Ad Fraud Statistics 2026: 20.64% IVT Rate,” 2026. https://www.fraudlogix.com/stats/ad-fraud-statistics-2026

- Fraudlogix, “Lead Generation Scams: 10 Schemes to Watch For in 2026.” https://www.fraudlogix.com/affiliate-blog/the-top-10-lead-generation-fraud-schemes-to-watch-out-for/

- U.S. Federal Communications Commission, “Telephone Consumer Protection Act, 47 CFR 64.1200.” https://www.ecfr.gov/current/title-47/chapter-I/subchapter-B/part-64/subpart-L/section-64.1200

- U.S. Code, “Restrictions on use of telephone equipment, 47 U.S.C. § 227.” https://uscode.house.gov/view.xhtml?req=granuleid:USC-prelim-title47-section227&num=0&edition=prelim

- PR Newswire, “Anura Launches Groundbreaking Global Ad Fraud Report, Forecasting $200 Billion in Losses by the End of 2028,” May 20, 2025. https://www.prnewswire.com/news-releases/anura-launches-groundbreaking-global-ad-fraud-report-forecasting-200-billion-in-losses-by-the-end-of-2028-302459767.html

- ActiveProspect, “TCPA class action lawsuits explode in 2025.” https://activeprospect.com/blog/tcpa-lawsuits-updates/

- WebRecon, “TCPA Litigation Statistics 2025.”

Closing

The 25% bot rate is what happens when an industry treats consent as a checkbox and certificates as proof. The December 2025 TrustedForm Bot Detection launch and the January 2026 acquisition of Verisk Marketing Solutions signal that the platform layer is finally rebuilding the consent stack around the human, not around the form session. Operators who instrument the bot signal across their feeds, run a 30-day sub-ID audit, and act on the kill list will improve recovered fraud spend, TCPA defense posture, and conversion economics at the same time. Operators who continue to treat the certificate as the answer will discover that fabricated consent is the most expensive line item in lead generation that no one is currently measuring, and that the plaintiff firms are measuring it on their behalf.