Returns do not arrive as a single catastrophe. They compound quietly – a percentage point here, a bad source there – until the math breaks and the operation is losing money on every lead it ships.

The standard response is reactive: review the returns dashboard, dispute the ones that seem unfair, issue credits on the rest, and move on. This approach mistakes the symptom for the problem. By the time a lead is returned, money has already changed hands in both directions. The supplier has been paid. Revenue has been reversed. Processing labor has been consumed. The buyer relationship has taken a small hit that accumulates over time.

Pre-delivery validation operates on different logic. Catching a bad lead before delivery costs the acquisition price and nothing more. Catching it after delivery costs the acquisition price, the processing time, the credit, and a fraction of the trust that took months to build. The economics favor prevention at nearly every price point above $20 per lead.

This playbook covers the validation stack that reduces returns before they happen – not the industry benchmarks (those are covered elsewhere), not the contract terms (those belong in a different conversation), but the operational mechanics of building a system that ships fewer bad leads in the first place.

Why Returns Happen: Root Cause Before Remediation

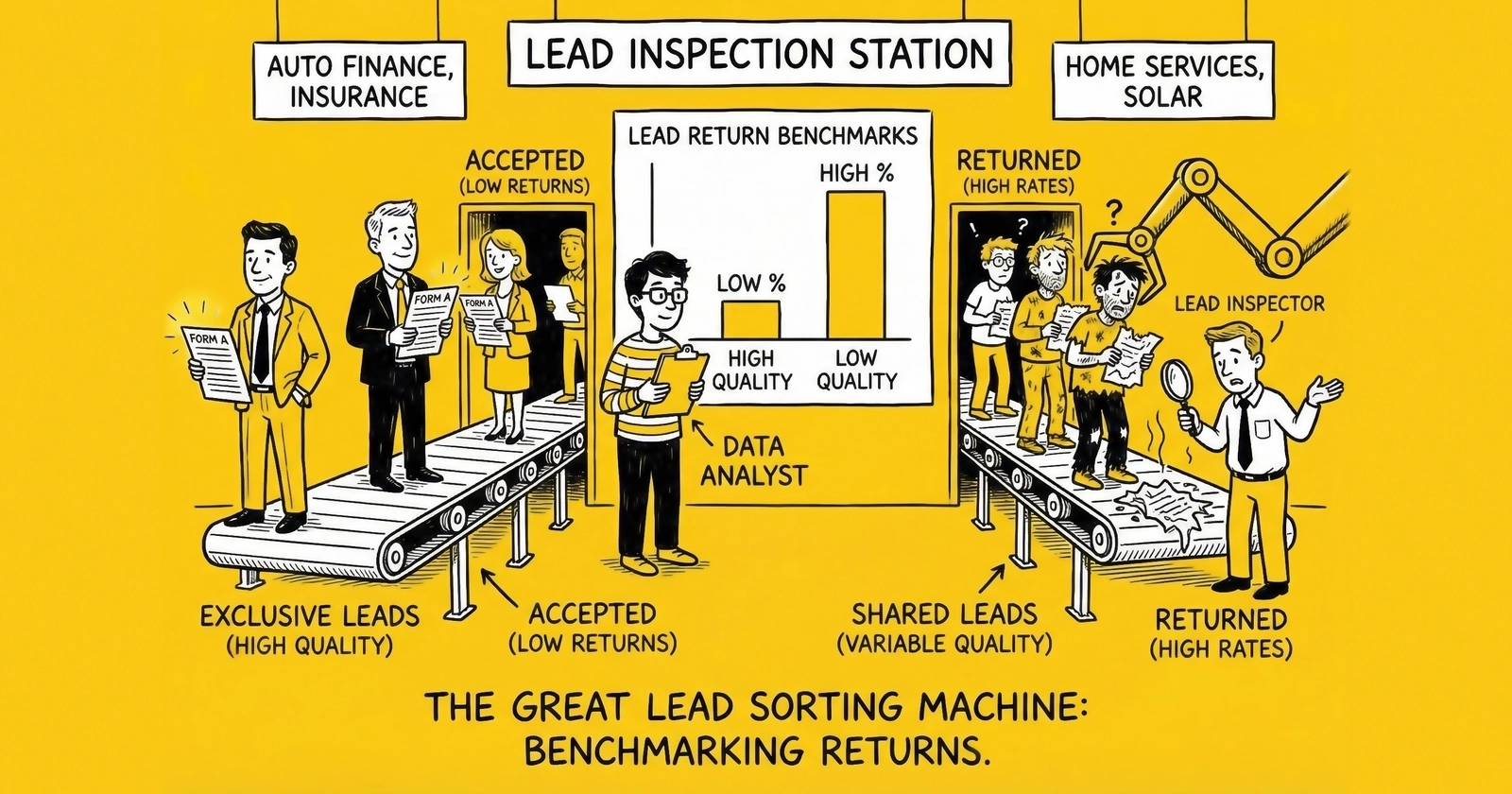

Building an effective pre-delivery stack requires understanding which failure modes drive your returns. The distribution shifts by vertical and source type, but four categories account for the vast majority.

Contact failure causes 30–40% of returns across most verticals. The buyer cannot reach the consumer. This breaks down into disconnected numbers, wrong numbers where someone else answers, and unreachable numbers where legitimate consumers never pick up. Phone verification addresses the first two categories directly. The third is harder to solve at the validation layer and is better approached through buyer expectation alignment than technical filtering.

Qualification mismatch drives 25–35% of returns. The lead does not meet the buyer’s stated criteria. Geographic filters were wrong. The consumer’s stated situation – homeownership, credit range, vehicle ownership – does not hold up when the buyer contacts them. Intent was browsing rather than active shopping. These mismatches reflect a failure somewhere between the consumer’s actual situation and what was submitted, which validation systems can address with identity and attribute verification.

Duplicates account for 15–20% of returns in typical operations. The buyer already has this consumer from a prior purchase. This breaks into same-window duplicates (resubmission within minutes or hours), rolling duplicates (days to weeks), and cross-source duplicates (same consumer from a different vendor). Most distribution platforms catch same-window duplicates automatically. Rolling and cross-source duplicates require active scrubbing against buyer-provided suppression files – a step many operators skip because it requires operational coordination with each buyer.

Fraud and incentivized traffic generate 10–20% of returns depending on source quality. Bot submissions, survey site completers, sweepstakes entries, and form submissions from fake or incentivized identities all produce leads that fail immediately on contact. Fraud detection layers address these before delivery.

Each category requires different technical interventions. A validation stack that addresses only phone verification – the most common single-layer approach – leaves qualification mismatches, duplicates, and fraud largely unaddressed.

Layer One: Real-Time Phone Verification

Phone verification is the highest-leverage single validation step for most verticals. Contact failure is the leading return reason, and phone verification directly intercepts the most common failure modes within that category.

What Phone Verification Catches

Line disconnection is the clearest case. A number that has been disconnected cannot reach the consumer. No amount of call attempts, voicemail messages, or follow-up sequences recovers a disconnected number. Verification APIs check line status in real time – disconnected numbers fail immediately.

Number type mismatch matters for compliance as much as quality. Wireless versus landline distinction affects TCPA calling requirements and contact rate expectations. VoIP numbers have different reachability profiles than mobile numbers. Knowing the line type before delivery allows for routing decisions that match buyer capabilities and legal requirements.

Carrier and geographic alignment catches numbers that do not match the consumer’s stated location. A phone number registered to a carrier in Florida while the consumer claims to be in Oregon is a data quality signal worth flagging. It is not necessarily a disqualifier, since consumers keep their numbers when they move, but it should feed into a broader quality score.

Name-to-number reverse lookup confirms that the number is associated with the name provided. Mismatches between submitted name and the number’s registered identity suggest either data entry errors or intentional misrepresentation. For higher-value leads where identity matters, this lookup adds meaningful signal.

Verification Implementation Options

Three approaches exist, each with different trade-offs:

API-based real-time verification integrates with services like Twilio Lookup, Ekata (now Mastercard), or Numverify. The API call happens when the consumer submits the form. A failed check triggers a field validation error asking the consumer to review their number, or routes the lead to a lower-quality tier rather than blocking it outright. Response times run under 200ms for major providers, making real-time gate logic practical for web forms.
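The gate logic described above can be sketched without committing to any one provider. In this minimal example, `PhoneLookup` is a hypothetical, provider-neutral shape for a lookup response, and the three action names are assumptions; a real integration would map the provider's actual response fields onto it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PhoneLookup:
    """Hypothetical, provider-neutral view of a phone lookup response."""
    valid: bool                # number is well-formed and assignable
    connected: Optional[bool]  # None when the provider returns no line status
    line_type: Optional[str]   # "mobile", "landline", "voip", or None


def gate_phone(lookup: PhoneLookup) -> str:
    """Map a lookup result to one of three form-level actions."""
    if not lookup.valid or lookup.connected is False:
        # Ask the consumer to review the number instead of silently dropping it.
        return "prompt_correction"
    if lookup.line_type == "voip" or lookup.connected is None:
        # Possibly reachable, but a weaker signal: route to a lower-quality tier.
        return "downgrade"
    return "accept"
```

Treating an unknown line status as a downgrade rather than a block follows the text's preference for routing over outright rejection; operators with stricter buyers may choose differently.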

IVR ping verification goes further: after form submission, the system dials the provided number and asks the consumer to press a digit confirming their request. This approach drops conversion rates 10–25% because some consumers will not complete the call. In exchange, it eliminates contact failure returns almost entirely for the leads that pass. The economics favor IVR verification for leads priced above $40–50 where a 25% volume reduction is offset by the return rate improvement.

SMS confirmation is the middle path. After form submission, the consumer receives a text message asking them to reply YES to confirm their inquiry. Confirmation rates run 60–75% for well-written confirmation messages. Leads that do not confirm within 5–10 minutes can be downgraded to aged status or routed to buyers with lower quality thresholds. This approach provides significant return reduction with a smaller volume impact than IVR.
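A minimal sketch of the confirmation window, assuming a 10-minute cutoff (the upper end of the range above) and three illustrative tier names. The function only classifies a lead; sending the message and receiving the reply are left to whatever messaging provider is in use.

```python
from datetime import datetime, timedelta
from typing import Optional

CONFIRM_WINDOW = timedelta(minutes=10)  # assumed cutoff; the text suggests 5-10 minutes


def sms_tier(sent_at: datetime, confirmed_at: Optional[datetime], now: datetime) -> str:
    """Classify a lead by whether the consumer confirmed inside the window."""
    if confirmed_at is not None and confirmed_at - sent_at <= CONFIRM_WINDOW:
        return "confirmed"
    if now - sent_at <= CONFIRM_WINDOW:
        return "pending"    # still inside the window, keep waiting
    return "downgrade"      # no timely confirmation: aged or lower-threshold buyers
```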

Verification Cost Reality

Phone verification adds cost. The question is whether the cost is justified.

Verification API calls run $0.002–0.01 per lookup for basic line type and connectivity checks. Full identity verification with name-to-number matching runs $0.05–0.15 per call. IVR confirmation costs $0.02–0.05 in carrier charges plus API fees.

At a $40 lead price with a 15% return rate, verification that reduces returns to 8% saves $2.80 per lead in refunds plus avoided processing labor. The breakeven on $0.10 verification costs occurs when verification reduces returns by roughly 0.25 percentage points – a threshold most implementations clear by a wide margin.
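The arithmetic behind those two figures is short enough to keep as a reusable check. This sketch counts refunds only; processing labor and relationship costs are left out, so it understates the savings. The function names are illustrative.

```python
def refund_savings_per_lead(price: float, rate_before: float, rate_after: float) -> float:
    """Refund dollars avoided per delivered lead when the return rate drops."""
    return price * (rate_before - rate_after)


def breakeven_reduction(price: float, verification_cost: float) -> float:
    """Return-rate reduction (as a fraction) at which verification pays for itself."""
    return verification_cost / price


# The example from the text: $40 lead, returns falling from 15% to 8%.
savings = refund_savings_per_lead(40.0, 0.15, 0.08)  # about $2.80 per lead
breakeven = breakeven_reduction(40.0, 0.10)          # 0.0025, i.e. 0.25 percentage points
```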

Layer Two: Email Verification and Hygiene

Email addresses are less central to contact success in verticals where phone outreach dominates, but they serve multiple validation purposes that affect return rates in ways that are easy to overlook.

What Email Verification Catches

Syntax and deliverability checks are the baseline. A malformed email address – missing the @ symbol, invalid domain extension, typos – indicates either inattentive form completion or deliberate falsification. Neither produces a quality lead. Syntax validation runs server-side during form submission and costs effectively nothing in computation.

Domain existence verification goes further. An email at a domain that does not exist (or no longer exists) is not deliverable and typically signals a fake submission. Real-time MX record lookups verify that the domain accepts email. This catches common fake domains (gmail.con, yaho.com, etc.) that pass syntax validation.

Mailbox-level verification – confirming that the specific address exists at a valid domain – catches fake addresses at real domains. A consumer who enters an address such as john.doe@gmail.com for a mailbox that does not exist is providing a contact address that bounces. Services like ZeroBounce, NeverBounce, and Hunter.io handle this at $0.005–0.02 per verification.

Disposable and temporary email detection identifies addresses from services like Mailinator, Guerrilla Mail, and similar providers. These are single-use addresses created specifically to complete web forms without providing real contact information. Any lead with a disposable email address has low contact probability regardless of the phone number provided.

Role account detection flags addresses like info@, admin@, support@, and similar patterns. These are rarely personal email addresses and almost never belong to the consumer filling out the lead form. A solar lead with the email address info@examplecompany.com warrants scrutiny regardless of the phone number.
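The checks that need no network call (syntax, disposable domains, role accounts) can be sketched with the standard library alone. The domain and role lists below are tiny illustrative samples, not a maintained feed, and the pattern is deliberately simple. Domain and mailbox existence checks are omitted because they require DNS lookups or a verification service.

```python
import re

# Illustrative samples only; a production check would use a maintained list.
DISPOSABLE_DOMAINS = {"mailinator.com", "guerrillamail.com"}
ROLE_LOCAL_PARTS = {"info", "admin", "support", "sales", "contact"}

# Simple pattern: one "@", a dotted domain, and an alphabetic TLD of 2+ letters.
EMAIL_RE = re.compile(r"^[^@\s]+@([A-Za-z0-9-]+\.)+[A-Za-z]{2,}$")


def email_flags(address: str) -> set:
    """Return the set of warning flags raised by an email address."""
    address = address.strip().lower()
    if not EMAIL_RE.match(address):
        return {"bad_syntax"}
    local, domain = address.rsplit("@", 1)
    flags = set()
    if domain in DISPOSABLE_DOMAINS:
        flags.add("disposable")
    if local in ROLE_LOCAL_PARTS:
        flags.add("role_account")
    return flags
```

An address at `gmail.con` raises no flag here, which illustrates why the domain existence check is a separate step: the address is syntactically valid even though the domain cannot receive mail.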

Email as Identity Signal

Beyond contact success, email addresses serve as identity anchors. For operations running duplicate detection, email is often more stable than phone number – consumers change phone numbers more frequently than email addresses. A hashed email match against a buyer’s suppression file is a more reliable duplicate signal than a phone match alone.

Email also feeds into broader fraud signals. A disposable email combined with a VoIP phone number and a browser environment that looks automated is a different risk profile than an established email domain combined with a mobile carrier number submitted from a residential IP address. Email verification data integrates with fraud scoring layers to produce a more complete picture.

Layer Three: Duplicate Detection Before Delivery

Duplicate returns are among the most preventable categories, yet most operations address only the easiest cases. Same-window duplicates – a consumer submitting the same form twice within minutes – are caught by every distribution platform. The harder and more expensive duplicates slip through.

The Duplicate Taxonomy

Same-session duplicates submit within minutes of each other, often because the consumer hit the back button, refreshed the confirmation page, or encountered a submission error that caused them to try again. Distribution platform deduplication catches these. False positive risk is minimal.

Rolling window duplicates are submissions from the same consumer days or weeks apart, within the window defined in the buyer’s contract. A 72-hour window is common for real-time leads. Most platform-level deduplication covers this window, but the coverage depends on how the platform stores and checks its deduplication database. Test your platform’s actual behavior – do not assume it works as documented.

Cross-source duplicates are the expensive gap. A consumer who submitted through your Google Ads traffic last week may also have submitted through an affiliate’s Facebook campaign. Both leads end up with the same buyer. One gets returned as a duplicate even though neither lead source violated any rules. The buyer has a legitimate return claim; the seller has no way to know unless they checked against the buyer’s suppression file.

Re-submission duplicates occur when consumers who previously submitted through your operation return weeks or months later. Policies on re-submission timing vary by buyer and vertical – some buyers accept re-submissions after 30 days, others after 90. Knowing which buyers accept re-submissions and at what intervals requires active management.
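A rolling-window check reduces to remembering when each consumer was last seen. The sketch below uses an in-memory dictionary as a stand-in for the platform's deduplication store and assumes the 72-hour window mentioned above. The `key` would be a normalized or hashed phone or email.

```python
from datetime import datetime, timedelta
from typing import Dict


def check_and_record(key: str, submitted_at: datetime,
                     last_seen: Dict[str, datetime],
                     window: timedelta = timedelta(hours=72)) -> bool:
    """Return True if this consumer key was seen within the window.

    The latest sighting is always recorded, so the window rolls forward
    from the most recent submission (an assumed policy, not a standard).
    """
    prior = last_seen.get(key)
    last_seen[key] = submitted_at
    return prior is not None and submitted_at - prior <= window
```

Running the same function against test leads with known timestamps is one way to compare your platform's actual window behavior against what it documents.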

Implementing Suppression File Scrubbing

Cross-source and re-submission duplicate prevention requires buyers to share suppression files. This is operationally more complex than single-source deduplication, but it is the step that actually reduces returns in this category.

Suppression file mechanics: The buyer provides a hashed file of phone numbers, email addresses, or both for consumers currently in their system. You check each incoming lead against the file before delivery. Matches get filtered or flagged.

Hashing for privacy: Suppression files should use hashed identifiers – SHA-256 or similar – rather than raw contact data. The buyer hashes their suppression list before sharing. You hash incoming leads before comparison. Neither party exposes actual consumer contact data. This approach satisfies reasonable data privacy requirements while enabling meaningful duplicate detection.

Update cadence: A suppression file shared once and never updated provides diminishing value as buyers add new leads. Establish a cadence – weekly minimum, daily preferred for high-volume relationships – for suppression file updates. Automate the exchange if volume justifies it; API-based suppression list sharing is available through most distribution platforms and through ActiveProspect’s SuppressionList product.

Coverage decisions: Not every buyer will share suppression files. Some are protective of their data; others lack the technical infrastructure to generate one. For buyers who will not share, the only protection against cross-source duplicates is contractual – clear return policy language that specifies how cross-source duplicates are handled and who bears the cost.
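The hashed comparison described under suppression file mechanics can be sketched as follows. The normalization rules (digits only, US country code dropped, lowercased email) are assumptions; whatever rules are used, both parties must apply the same ones before hashing or matching records will not line up.

```python
import hashlib
import re


def normalize_phone(raw: str) -> str:
    """Digits only, dropping a leading US country code (assumed convention)."""
    digits = re.sub(r"\D", "", raw)
    return digits[1:] if len(digits) == 11 and digits.startswith("1") else digits


def normalize_email(raw: str) -> str:
    return raw.strip().lower()


def sha256_hex(value: str) -> str:
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


def is_suppressed(phone: str, email: str, suppression_hashes: set) -> bool:
    """True if either hashed identifier appears in the buyer's hashed file."""
    return (sha256_hex(normalize_phone(phone)) in suppression_hashes
            or sha256_hex(normalize_email(email)) in suppression_hashes)
```

Loading the buyer's file into a set keeps each lookup constant-time, which matters when the check runs inline before every delivery.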

Layer Four: Consent Freshness Checks

Consent validity operates on a timeline. A consumer who provided express written consent to receive calls and texts at 2pm yesterday has different regulatory standing than one whose consent was captured 91 days ago on a form that no longer exists.

The practical return implications of consent staleness are less direct than phone or duplicate issues. Buyers rarely cite consent staleness as a return reason; they cite contact failure or qualification mismatch instead. But consent staleness is a quality signal that predicts other problems: consumers who submitted long ago have lower current intent, higher likelihood of not remembering the form submission, and higher denial rates when contacted.

What Consent Freshness Measures

Time since submission: Most buyers price leads based on age. Leads submitted within the past hour are worth more than leads submitted six hours ago, which are worth more than yesterday’s leads. For real-time lead programs, delivering a lead that is hours old when your agreement specifies real-time delivery creates a quality dispute independent of any contact outcome.

Consent document availability: For calls covered by TCPA requirements, the consent certificate must remain retrievable. TrustedForm and Jornaya’s TCPA Guardian generate certificates at submission. Certificates that have expired or whose backing URLs are no longer accessible leave the seller unable to substantiate the consent claim if a buyer disputes or a compliance issue arises.

Form version validity: Consent language on the form at the time of submission matters. If a buyer’s consent language has been updated since the consumer submitted, the consent captured may not satisfy the buyer’s current standard. Tracking form version alongside certificate ID allows operators to identify leads whose consent was captured against outdated language.

Implementing Consent Verification

TrustedForm certificate verification: Before delivering a lead, verify that the TrustedForm certificate ID embedded in the lead record is retrievable and has not expired. TrustedForm’s API returns certificate status and metadata including the certificate age, the page URL, and the consent language present at submission. Leads with expired certificates or inaccessible certificate pages should not be delivered to buyers who require documentation.

Timestamp validation: Build timestamp checks into your delivery workflow. If a buyer has specified a maximum lead age of 30 minutes for real-time pricing, enforce that threshold before delivery. Leads that age out of the real-time window should route to aged buyers at adjusted pricing rather than shipping at full price and inviting returns.

Consent language auditing: For buyers with specific consent language requirements, capture and compare the consent language at submission time against the buyer’s current requirements. This is more infrastructure than most operators build for individual buyers, but for high-volume relationships with buyers who have strict consent standards, it prevents disputes downstream.
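The timestamp check is the simplest of the three to automate. This sketch assumes the 30-minute real-time limit used in the example above and three illustrative route names. Certificate retrieval is not shown because it depends on the certificate provider's API.

```python
from datetime import datetime, timedelta


def route_by_age(submitted_at: datetime, now: datetime,
                 max_realtime_age: timedelta = timedelta(minutes=30)) -> str:
    """Decide whether a lead still qualifies for real-time delivery."""
    age = now - submitted_at
    if age < timedelta(0):
        # A submission time in the future points to clock skew or bad data.
        return "hold"
    return "real_time" if age <= max_realtime_age else "aged"
```

Both timestamps should be in the same timezone, ideally UTC, so that a form server and a delivery server in different regions do not misjudge lead age.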

Layer Five: Buyer-Specific Quality Filters

Generic pre-delivery validation catches problems that affect all leads equally. Buyer-specific filters address the reality that different buyers have different quality thresholds, geographic constraints, capacity limits, and qualification requirements that do not map to any universal standard.

Types of Buyer-Specific Filters

Geographic filters: A mortgage lender licensed in 12 states needs to receive only leads from those states. A solar installer serving specific counties within a state will return every lead from outside their service area. Geographic filters at the delivery layer prevent these returns. Most distribution platforms support geographic routing as a standard feature – the issue is whether filter rules are configured with sufficient precision. State-level routing is table stakes. County-level, zip code-level, or radius-based routing requires more configuration but delivers proportionally fewer return claims.

Qualification attribute filters: Beyond geography, buyers have qualification requirements tied to their products. A mortgage buyer may require leads to have a minimum stated credit score of 620. A solar buyer may require homeownership (not rental). A Medicare supplement buyer may require the consumer to be within a specific age band. These requirements should be captured in your buyer configuration and applied as filters before delivery, not as return reasons after the fact.

Capacity and volume controls: A buyer who has hit their daily cap should not receive additional leads regardless of quality. Delivering past capacity creates two problems: the buyer returns the leads as excess (which counts against your return rate), or the buyer’s team cannot work the leads within the response time window that maximizes contact rates, degrading conversion performance that eventually affects their bid. Lead caps should be enforced at the routing layer, not left as a problem for the buyer to manage.

Contact rate thresholds by source: Some buyers have learned through experience that leads from certain source types contact at rates below their operational threshold. A buyer whose call center depends on 45% contact rates cannot profitably work leads from incentivized survey sources that contact at 25%. Configuring source-level routing that respects buyer contact rate requirements reduces returns by preventing the delivery of leads the buyer will never successfully work.

Suppression list integration: Beyond the buyer-shared suppression files described above, buyers may have internal DNC lists, active customer lists (consumers who should not receive sales calls), or prior bad actor lists (consumers who have historically made TCPA claims). Integrating these into pre-delivery filtering requires the buyer to provide and maintain the lists, but it eliminates a meaningful return category.
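Several of the filter types above can be expressed as one eligibility check per buyer. The `BuyerConfig` and `Lead` shapes here are hypothetical and cover only geography, capacity, credit, and homeownership. Returning the reason for exclusion, rather than a bare boolean, makes it easier to audit why a buyer did not receive a lead.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass
class BuyerConfig:  # hypothetical configuration record
    name: str
    states: Set[str]
    daily_cap: int
    min_credit_score: Optional[int] = None
    requires_homeowner: bool = False


@dataclass
class Lead:  # hypothetical lead record
    state: str
    credit_score: Optional[int]
    homeowner: bool


def rejection_reason(lead: Lead, buyer: BuyerConfig, delivered_today: int) -> Optional[str]:
    """Return why the buyer should not receive this lead, or None if eligible."""
    if delivered_today >= buyer.daily_cap:
        return "cap_reached"
    if lead.state not in buyer.states:
        return "out_of_area"
    if buyer.min_credit_score is not None:
        if lead.credit_score is None or lead.credit_score < buyer.min_credit_score:
            return "credit_below_minimum"
    if buyer.requires_homeowner and not lead.homeowner:
        return "not_homeowner"
    return None
```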

Building the Filter Configuration System

Buyer-specific filter management gets complicated at scale. Maintaining filter configurations for 20+ buyers across multiple verticals, each with different geographic restrictions, qualification requirements, capacity caps, and suppression lists, requires a system rather than manual configuration.

Distribution platforms like boberdoo, LeadExec (ClickPoint Software), Phonexa, and LeadsPedia support buyer-level filter configuration. The configuration options vary in sophistication – most handle geographic and capacity filters easily, fewer handle dynamic suppression list integration. For platforms that lack native suppression list integration, build the check into your pre-submission workflow before the lead reaches the distribution system.

Document buyer filter requirements in a single reference source. When buyers update their geographic footprint, add a qualification criterion, or change their capacity parameters, update the filter configuration immediately. A disconnect between what a buyer has told you they need and what your filters actually enforce creates a return liability that compounds with every delivery.

The Validation Stack in Practice

Individual validation layers address individual failure modes. The compounding benefit comes from running them together, because a lead that fails multiple checks provides different information than one that fails a single check.

Failure Mode Stacking

A lead with a disconnected phone number is a contact failure. A lead with a disconnected phone number, a disposable email address, and an IP address associated with a datacenter is fraud. A lead with valid contact information that matches a buyer’s suppression file is a duplicate. A lead with valid contact information, no suppression match, but a 91-day-old TrustedForm certificate and a stated credit score below the buyer’s threshold should route to aged buyers rather than ship as real-time qualified inventory.

Building a quality score that aggregates signals from each validation layer – rather than treating each as an independent binary pass/fail – allows for nuanced routing decisions. High-confidence leads route to premium buyers at full price. Leads with one or two warning flags route to buyers with more lenient standards at adjusted pricing. Leads that fail multiple checks get filtered before delivery rather than shipped and returned.

Quality Score Architecture

A simple quality scoring model assigns points based on validation outcomes:

| Validation Signal | Points |
|---|---|
| Phone verified, mobile carrier | 20 |
| Phone verified, landline or VoIP | 10 |
| Phone fails line connectivity check | -30 |
| Email verified, real mailbox | 15 |
| Email disposable or undeliverable | -20 |
| Name-to-phone match confirmed | 10 |
| Name-to-phone lookup: no match found | 0 |
| Duplicate against buyer suppression file | -50 |
| TrustedForm certificate valid, under 30 min | 15 |
| TrustedForm certificate valid, 30-60 min | 5 |
| Certificate expired or inaccessible | -15 |
| IP address residential | 10 |
| IP address datacenter or proxy | -20 |
| Fraud score from scoring provider: low | 15 |
| Fraud score: medium | 0 |
| Fraud score: high | -30 |

Leads scoring above 60 route to premium buyers as standard inventory. Leads scoring 30–60 route to buyers with adjusted quality standards at reduced prices. Leads below 30 are filtered before delivery.
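The point table and routing bands translate directly into a lookup and two comparisons. The signal keys below are illustrative names for the table rows; the point values and thresholds are the ones given above, with a score of exactly 30 or 60 treated as falling in the middle band.

```python
POINTS = {
    "phone_mobile": 20,
    "phone_landline_or_voip": 10,
    "phone_disconnected": -30,
    "email_verified": 15,
    "email_disposable_or_undeliverable": -20,
    "name_phone_match": 10,
    "name_phone_no_match": 0,
    "suppression_duplicate": -50,
    "cert_under_30_min": 15,
    "cert_30_to_60_min": 5,
    "cert_expired_or_inaccessible": -15,
    "ip_residential": 10,
    "ip_datacenter_or_proxy": -20,
    "fraud_low": 15,
    "fraud_medium": 0,
    "fraud_high": -30,
}


def quality_score(signals) -> int:
    """Sum the points for every validation signal observed on a lead."""
    return sum(POINTS[s] for s in signals)


def route(score: int) -> str:
    if score > 60:
        return "premium"
    if score >= 30:
        return "adjusted"
    return "filtered"


clean = ["phone_mobile", "email_verified", "name_phone_match",
         "cert_under_30_min", "ip_residential", "fraud_low"]
print(quality_score(clean), route(quality_score(clean)))  # 85 premium
```

A lead with every positive signal scores 85, so the premium band above 60 tolerates one or two missing signals but not an outright failure.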

Calibrate the thresholds against your actual return data. Run a retrospective analysis against your last 90 days of returned leads: what would their quality scores have been if the system had been active? If the threshold catches 80% of returns with 15% false positives (valid leads incorrectly flagged), that is likely better economics than your current return rate.
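The retrospective analysis amounts to two ratios computed at a candidate threshold. In this sketch the history is a list of `(score, was_returned)` pairs, an assumed shape, and "false positive rate" means the share of non-returned leads that the threshold would have filtered.

```python
from typing import List, Tuple


def threshold_performance(history: List[Tuple[int, bool]],
                          threshold: int) -> Tuple[float, float]:
    """Return (catch_rate, false_positive_rate) for leads scoring below threshold."""
    returned = [score for score, was_returned in history if was_returned]
    kept = [score for score, was_returned in history if not was_returned]
    catch_rate = (sum(s < threshold for s in returned) / len(returned)
                  if returned else 0.0)
    false_positive_rate = (sum(s < threshold for s in kept) / len(kept)
                           if kept else 0.0)
    return catch_rate, false_positive_rate
```

Sweeping the threshold across a range and comparing the two rates at each step shows where additional filtering starts costing more in lost valid leads than it saves in returns.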

What Pre-Delivery Validation Cannot Fix

Pre-delivery validation is not a complete solution to the return problem. Understanding its limits prevents over-investment in validation at the expense of source management, buyer communication, and contract clarity.

Intent mismatch is the hardest problem for technical systems to address. A consumer who provided accurate contact information, a genuine identity, and a valid form submission but who was only browsing and has no current purchase intent will pass every validation layer. They will then fail to engage when the buyer contacts them, leading to either a return dispute (the buyer claims the consumer “is not interested,” which the seller disputes as not meeting return criteria) or buyer dissatisfaction that eventually reduces bids and caps. Addressing intent mismatch requires traffic source quality management, not validation improvements.

Buyer-side process failures generate returns that validation cannot prevent. A buyer whose call center is understaffed, whose dialer settings miss the contact window, or whose sales team struggles to convert leads from a specific demographic will return leads at elevated rates regardless of quality. Identifying these patterns requires analysis that looks at return rates by buyer and context, not just by lead characteristics.

Market conditions affect return rates in ways that precede any quality signal. When mortgage rates spike, purchase intent drops rapidly, and leads that were genuine inquiries become stale before the buyer can work them. When an HVAC installation season ends earlier than normal due to weather, leads shipped in the final weeks carry higher rejection risk. These temporal patterns require source and pricing adjustments, not validation improvements.

Building the Operations Process

A validation stack that works technically but is not embedded in operations reverts to patchwork quickly. The following process elements make pre-delivery validation persistent rather than a one-time configuration.

New Source Onboarding Protocol

Every new traffic source should run through a qualification period before receiving standard volume caps and delivery commitments.

Small-batch testing: Accept 10–20 leads from a new source before opening volume. Run these through the full validation stack and track pass rates by validation layer. A source where 30% of leads fail phone verification before delivery is telling you something important.

Return window observation: Track returns across the complete return window before scaling. If your standard return window is 72 hours, do not scale a source until you have 72 hours of return data on at least 50 leads. A source with two visible days of 5% returns that spikes to 20% on day three has a time-delayed quality problem that small-batch testing will surface if you wait for the full window.

Source-level return attribution: Tag every lead with its source at delivery. When returns come in, attribute them back to the originating source. This requires your distribution system to maintain source tracking through the return workflow, which many platforms support but few operators configure.
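Once every lead carries a source tag, attribution is a grouped ratio. The record shape below (a dict with `source` and `returned` keys) is hypothetical; in practice the data would come from the distribution platform's delivery and return logs.

```python
from collections import defaultdict
from typing import Dict, Iterable


def return_rate_by_source(leads: Iterable[dict]) -> Dict[str, float]:
    """Compute the return rate for each source from tagged lead records."""
    delivered = defaultdict(int)
    returned = defaultdict(int)
    for lead in leads:
        delivered[lead["source"]] += 1
        returned[lead["source"]] += int(lead["returned"])
    return {source: returned[source] / delivered[source] for source in delivered}
```

Small samples produce noisy rates, so a per-source rate is only meaningful once the source has delivered enough leads across a full return window.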

Ongoing Source Management

Source quality degrades. A traffic source that performed at 8% returns six months ago may be running at 15% today because the publisher changed their audience targeting, loosened their consent practices, or shifted to lower-quality inventory. Source-level return monitoring with automated alerts catches this before the problem becomes structural.

Alert thresholds by source: Configure alerts when any source’s 7-day rolling return rate reaches 1.5x its 90-day baseline. A source running 8% historically that spikes to 12% over a week warrants investigation – not termination, but understanding. A source that was at 8% and is now at 18% warrants immediate cap reduction.

Pre-delivery failure rate monitoring: Track not just delivered lead returns but pre-delivery validation failure rates by source. A source where 40% of submitted leads fail phone verification is a different quality problem than one where 5% fail. Pre-delivery failure rates often degrade before return rates do, because the validation stack intercepts many of the newly invalid leads before they can surface as returns.
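The alert rule can be sketched as a comparison of two rates. The 1.5x investigation ratio comes from the text; the 2.0x ratio for cap reduction is an assumption chosen so that the 8% to 18% example lands in the stronger tier. Rates are passed in percent (8.0 for 8%).

```python
def source_alert(rate_7d: float, baseline_90d: float,
                 investigate_ratio: float = 1.5,
                 reduce_cap_ratio: float = 2.0) -> str:
    """Classify a source by how far its recent return rate sits above baseline."""
    if baseline_90d <= 0:
        return "no_baseline"  # new source or no recorded returns yet
    if rate_7d >= reduce_cap_ratio * baseline_90d:
        return "reduce_cap"
    if rate_7d >= investigate_ratio * baseline_90d:
        return "investigate"
    return "ok"
```

The same function works for pre-delivery failure rates by passing the validation failure percentages instead of return percentages.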

Buyer Filter Maintenance

Buyer configurations drift when filter management is reactive rather than systematic.

Quarterly configuration reviews: Schedule quarterly reviews of every active buyer’s filter configuration. Confirm geographic restrictions match current service areas. Verify qualification thresholds match current product requirements. Update capacity parameters to reflect current buyer absorptive capacity.

Change notification protocols: When buyers update their requirements, they do not always think to notify their lead suppliers promptly. Build into buyer relationship management a periodic proactive check: “Have there been any changes to your geographic coverage, qualification requirements, or capacity that I should update in your filter configuration?”

Return reason tagging: Tag returned leads with the stated return reason. Analyze return reasons quarterly by buyer. A buyer returning 40% of leads for “out of service area” with no change in stated geographic requirements suggests a filter configuration error. A buyer returning increasing numbers for “consumer denies intent” suggests a traffic source quality issue. The patterns in return reasons guide the specific remediation.
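A quarterly reason-code review can start from a simple tally. This sketch assumes each return record is a dict with `buyer` and `reason` keys, which is a hypothetical schema, and reports each reason's share of that buyer's returns.

```python
from collections import Counter
from typing import Dict, Iterable


def reason_shares(returns: Iterable[dict], buyer: str) -> Dict[str, float]:
    """Share of a buyer's returns attributed to each stated reason."""
    reasons = Counter(r["reason"] for r in returns if r["buyer"] == buyer)
    total = sum(reasons.values())
    if total == 0:
        return {}
    return {reason: count / total for reason, count in reasons.items()}
```

Free-text reasons need to be mapped to a fixed set of codes first; otherwise near-identical reasons split into separate buckets and hide the pattern.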

Frequently Asked Questions

How much does a full pre-delivery validation stack cost per lead?

A complete stack – phone line check, email deliverability, duplicate scrub, TrustedForm verification, IP/device intelligence, and fraud scoring – runs approximately $0.25–0.60 per lead depending on vendors and volume. At $50 per lead price, this represents 0.5–1.2% of revenue. Compare against the value of a 5-percentage-point return rate improvement at $50 per lead: eliminating five returns per hundred leads saves $2.50 in refunds per lead, roughly a 4–10x return on validation spend before counting processing labor.

Does phone verification reduce conversion rates and by how much?

IVR confirmation reduces lead volume by 10–25% because some consumers abandon before completing the confirmation step. Basic API-based line verification with a correction prompt (not a confirmation call) reduces volume by less than 3% while eliminating disconnected and invalid number returns. The right approach depends on lead price and return rate severity. Operations where returns from contact failure represent more than 5% of delivered volume usually find IVR economics favorable above $35 per lead.

How often should suppression files be updated?

For active buyers in real-time lead programs, weekly updates are minimum viable; daily is better. A buyer adding 500 new leads to their system weekly has a suppression file that becomes 500 entries stale between weekly updates. In competitive verticals where consumers shop multiple lead sources within days, stale suppression files produce duplicates that could have been caught. Daily automated suppression file exchange is standard practice for high-volume buyer relationships.

What is the best way to handle leads that fail validation but are not clearly fraudulent?

Route them rather than discard. A lead with a flagged VoIP phone number and a disposable email address is likely low quality but not necessarily fraudulent. Create a quality tier for these leads that routes to buyers with lower price floors and higher return tolerance, or hold them for aged lead buyers who have priced in the quality risk. Discarding pre-delivery failures entirely destroys revenue on leads that might convert; routing them at adjusted prices recovers partial value while protecting your core buyer relationships.

How do buyer-specific filters interact with auction-based distribution systems?

In ping-post and auction systems, buyer filters apply at the ping stage before price is submitted. A lead that does not meet a buyer’s geographic or qualification filters should not generate a bid from that buyer – the system should exclude that buyer from the auction for that specific lead. Most auction-based distribution systems support filter-level bid exclusion. If yours does not, leads are being offered to buyers who will reject them post-acceptance, generating returns that filter configuration should have prevented.

What return rate should trigger a complete validation stack audit?

Any sustained rate above 1.5x vertical benchmark warrants investigation. For auto insurance, that is above 15%. For solar, above 30%. For mortgage, above 22%. If return rates are in benchmark range but margins are still compressed, audit the composition of returns by reason code. Elevated rates in specific categories (fraud, duplicates, contact failure) point to specific validation gaps. Elevated rates in “consumer denies intent” or “not interested” often point to source quality rather than validation failures.

Key Takeaways

Bad leads caught before delivery cost the acquisition price. Bad leads caught after delivery cost the acquisition price, the refund, the processing labor, and a piece of the buyer relationship. The economics of pre-delivery validation are compelling at nearly every lead price point above $20.

Phone verification is the highest-leverage single intervention. Contact failure causes 30–40% of returns. API-based phone verification with a correction prompt reduces this category substantially at a cost below $0.10 per lead.

Duplicate detection requires buyer cooperation. Same-window duplicates are caught by distribution platforms. Cross-source and re-submission duplicates require suppression file sharing. Operations without active suppression file exchange with buyers are absorbing a preventable return category.

Consent freshness predicts intent. Leads with current TrustedForm certificates and timestamps inside the buyer’s acceptable window have higher intent probability and lower denial rates than aged leads. Certificate verification at delivery prevents a quality dispute that consent-conscious buyers will otherwise raise.

Buyer-specific filters eliminate predictable mismatches. Geographic mismatches, qualification failures, and capacity overflows are fully preventable at the routing layer. Returns from these causes reflect filter configuration gaps, not traffic quality problems.

Build quality scoring, not just binary pass/fail. Leads that fail one validation signal are different from leads that fail five. A scoring model that routes based on aggregate quality signals extracts value from the middle tier while protecting premium buyer relationships from low-quality inventory.

Source-level return attribution drives the right interventions. Aggregate return rates hide source-level variation. A blended 12% return rate that contains a 4% source and a 24% source requires different action than a uniform 12% across all sources. Tag every lead, track every return, and attribute every return to its source.

Sources

- TrustedForm Documentation – ActiveProspect’s consent certification platform, certificate verification API, and compliance documentation

- Twilio Lookup API – Phone number intelligence including line type, carrier, and validity checking

- NeverBounce – Email verification service with deliverability, disposable address detection, and syntax validation

- Jornaya TCPA Guardian – Consumer journey documentation and TCPA compliance verification platform

Operational benchmarks reflect patterns across insurance, mortgage, solar, and home services verticals. Validation economics vary by lead price point, vertical, and traffic source mix. Test interventions against your specific return composition before committing to full-stack implementation.